What Is Artificial Intelligence?

Unit 1: Foundations of Artificial Intelligence — Section 1.1

When you hear "artificial intelligence," what comes to mind? Robots? Self-driving cars? ChatGPT? AI encompasses all of these — and defining what it actually is turns out to be surprisingly difficult.

Start with this Crash Course overview of AI definitions, learning from data, and current applications.

Four Ways to Define AI

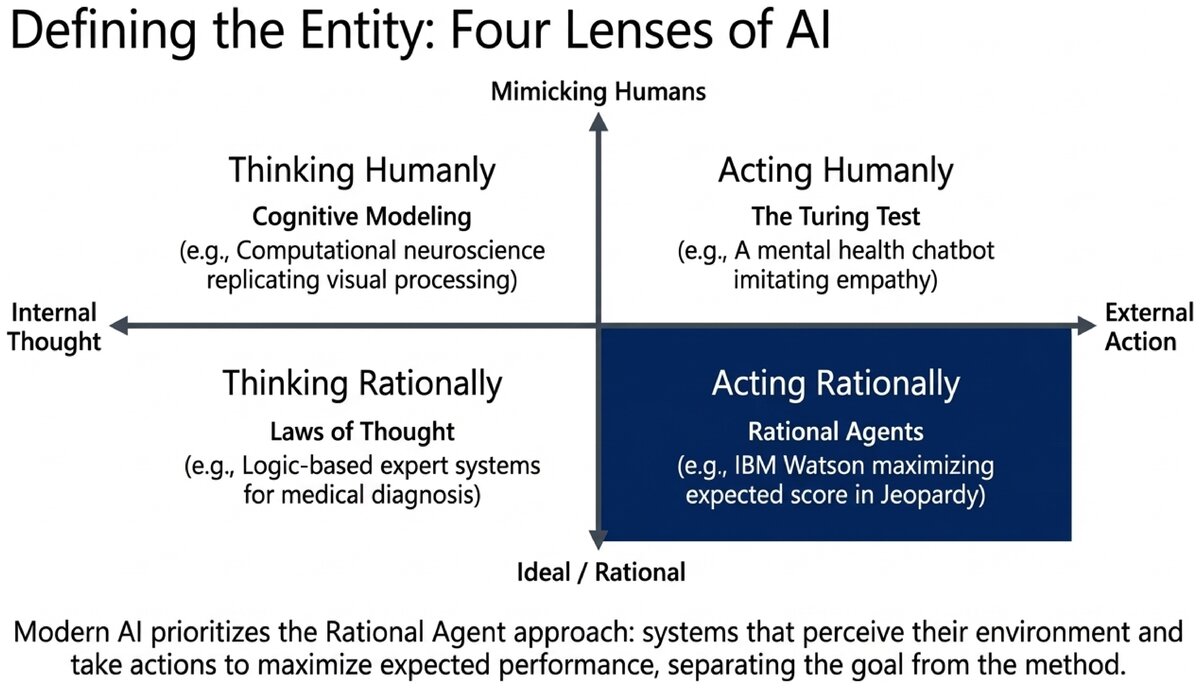

Researchers have approached the question "What is AI?" from four different directions. These approaches fall along two dimensions.

Human vs. Rational: Should AI systems mimic how humans think and act? Or should they seek the ideal (rational) way to think and act?

Thought vs. Action: Should we focus on internal thought processes? Or on external behavior and actions?

Combining those two dimensions produces four quadrants, each representing a distinct school of thought:

| | Human-like | Rational (Ideal) |
|---|---|---|
| Thinking | Thinking Humanly | Thinking Rationally |
| Acting | Acting Humanly | Acting Rationally |

Each quadrant has shaped AI research in different eras, and all four remain relevant today.
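As a rough illustration, the two dimensions can be treated as two independent choices that together name a quadrant. The sketch below is only a way to make the grid concrete; the names `Focus`, `Standard`, and `quadrant` are hypothetical and do not come from any AI library.

```python
from enum import Enum


class Focus(Enum):
    """First dimension: internal thought processes or external behavior."""
    THINKING = "Thinking"
    ACTING = "Acting"


class Standard(Enum):
    """Second dimension: measured against humans or against an ideal."""
    HUMAN = "Humanly"
    RATIONAL = "Rationally"


def quadrant(focus: Focus, standard: Standard) -> str:
    """Combine one value from each dimension into the quadrant's name."""
    return f"{focus.value} {standard.value}"


# Enumerate all four schools of thought from the table.
for focus in Focus:
    for standard in Standard:
        print(quadrant(focus, standard))
```

Each of the four printed names corresponds to one cell of the table and to one of the numbered approaches described next.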

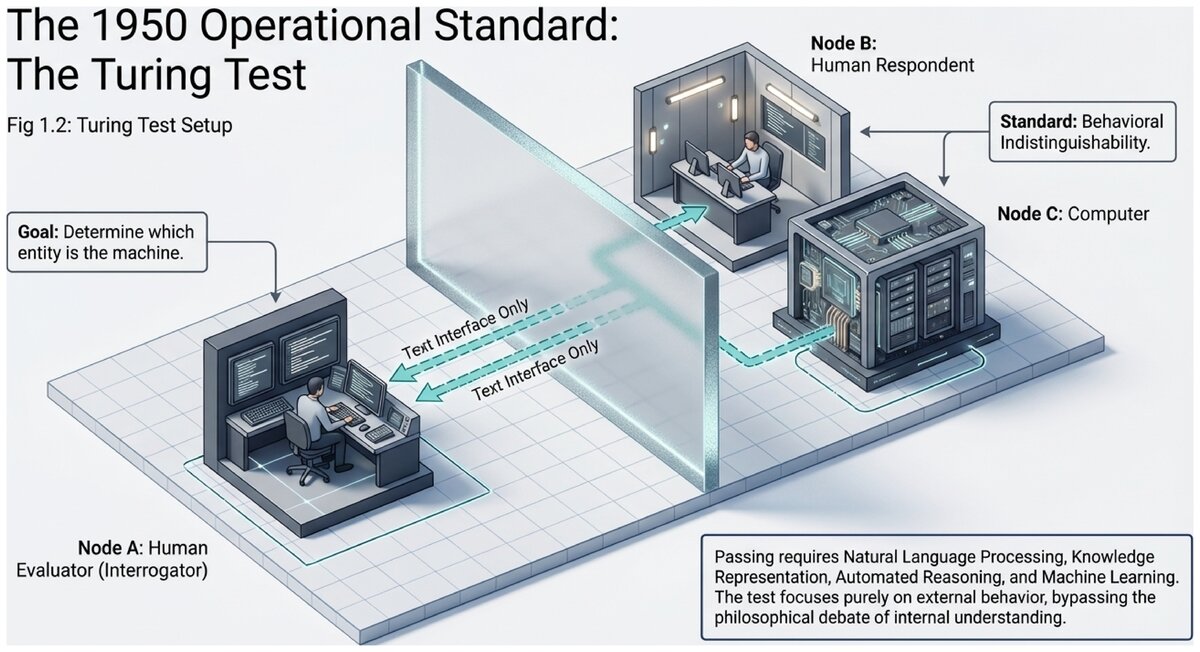

1. Acting Humanly: The Turing Test Approach

In 1950, mathematician Alan Turing proposed an operational test for machine intelligence: if a human evaluator conducts a text conversation with both a human and a machine and cannot reliably tell the difference, the machine should be considered intelligent. This is the Turing Test.

This TED-Ed video explains the Turing Test and what it reveals — and conceals — about intelligence.

To pass the Turing Test, a computer would need:

- Natural language processing: the ability to communicate in human language
- Knowledge representation: a way to store what it knows about the world
- Automated reasoning: the ability to draw conclusions and answer questions
- Machine learning: the ability to adapt to new information and detect patterns

- Turing Test: A behavioral test of machine intelligence, proposed by Alan Turing in 1950, in which a human evaluator attempts to distinguish between a human and a machine through text conversation alone. If the evaluator cannot reliably tell them apart, the machine is considered to have passed.

The Turing Test has been enormously influential, but also controversial. Critics note that a system could pass by imitating humans without actually understanding anything, a concern that philosopher John Searle developed in his Chinese Room argument (1980). Others argue that behavioral indistinguishability is precisely the right standard: if it acts like it understands, what more could we ask for?

2. Thinking Humanly: The Cognitive Modeling Approach

This approach tries to build AI systems that think the way human minds actually work. It requires two things: a theory of how human cognition operates (from psychology and neuroscience), and a program that implements that theory.

Example: Beginning in the late 1950s, Herbert Simon, Allen Newell, and J. C. Shaw developed the General Problem Solver, an AI program that attempted to solve problems by mimicking the mental steps humans reported taking. They validated their model by comparing the program’s behavior to recorded human problem-solving sessions.

- Cognitive Modeling: An approach to AI that attempts to replicate the internal mental processes of human cognition (how people perceive, reason, and remember) rather than just the external behavior.

Cognitive modeling has contributed to our understanding of both human intelligence and AI limitations. Humans use shortcuts, heuristics, and intuition that do not always align with pure logic — and modeling those shortcuts has proven useful in building more robust systems.

3. Thinking Rationally: The "Laws of Thought" Approach

This tradition, rooted in Aristotle’s logic, focuses on correct reasoning according to formal rules. If we can fully codify the laws of logical thought — what follows from what — perhaps we can build machines that reason flawlessly.

Gottfried Leibniz (17th century) dreamed of a "calculus ratiocinator," a machine that could settle any dispute through pure logic. In the 19th century, George Boole and Gottlob Frege developed the formal logical systems that made this dream technically expressible.

The challenge: Not all intelligent behavior is purely logical. How do you reason logically about uncertainty? "There is probably a 70% chance of rain" does not follow the same rules as "All bachelors are unmarried." Real-world intelligence requires handling incomplete, conflicting, and probabilistic information.

- Formal Logic: A system of rules for valid reasoning that guarantees correct conclusions from true premises. Classical propositional and predicate logic are the foundations of this approach to AI, though handling uncertainty requires extensions such as probability theory.
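To make "what follows from what" concrete, here is a minimal sketch of forward chaining over simple if-then rules, one of several ways to mechanize logical deduction. The rule set is a made-up illustration built around the bachelors example, and the function name `forward_chain` is an assumption for this sketch rather than a standard library call.

```python
def forward_chain(facts, rules):
    """Repeatedly apply if-then rules until no new facts can be derived.

    facts: iterable of statements known to be true.
    rules: list of (premises, conclusion) pairs; a rule fires when
           every one of its premises is already known.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if conclusion not in known and set(premises) <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical rules: "all bachelors are unmarried" and two others.
rules = [
    (("bachelor",), "unmarried"),
    (("bachelor",), "adult"),
    (("unmarried", "adult"), "may_marry"),
]

print(sorted(forward_chain({"bachelor"}, rules)))
```

Every derived fact here is certain once the premises are true. Nothing in this representation can express a statement such as "there is probably a 70% chance of rain," which is exactly the limitation described above.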

4. Acting Rationally: The Rational Agent Approach

The rational agent approach is the dominant paradigm in modern AI. A rational agent is anything that perceives its environment and takes actions to achieve the best expected outcome given its goals. It doesn’t need to think like a human or reason like a logician — it just needs to make good decisions.

This approach is more general than the others, mathematically precise, and directly connected to how we build real AI systems today.

Crucially, the rational agent framework separates the goal from the method. A rational agent chooses whatever action best serves its objectives — whether that means searching through logic, pattern-matching from data, or consulting a lookup table.
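One way to picture "best expected outcome" is as an expected-utility calculation: weigh each possible result of an action by its probability, then pick the action with the highest total. The sketch below assumes a toy umbrella scenario with invented probabilities and utility scores; `umbrella_model`, `expected_utility`, and `choose_action` are hypothetical names used only for illustration.

```python
from typing import Callable, Dict, List, Tuple

# For each action: a list of (probability, utility) pairs, one per outcome.
Outcomes = Dict[str, List[Tuple[float, float]]]


def expected_utility(outcomes: List[Tuple[float, float]]) -> float:
    """Probability-weighted average of the utilities."""
    return sum(p * u for p, u in outcomes)


def choose_action(percept: str, model: Callable[[str], Outcomes]) -> str:
    """Return the action whose expected utility is highest for this percept."""
    options = model(percept)
    return max(options, key=lambda action: expected_utility(options[action]))


def umbrella_model(percept: str) -> Outcomes:
    """Toy world model with invented numbers (an assumption for this sketch)."""
    p_rain = 0.7 if percept == "cloudy" else 0.1
    return {
        "take_umbrella": [(p_rain, 8.0), (1 - p_rain, 6.0)],
        "leave_umbrella": [(p_rain, 0.0), (1 - p_rain, 10.0)],
    }


print(choose_action("cloudy", umbrella_model))  # take_umbrella
print(choose_action("sunny", umbrella_model))   # leave_umbrella
```

Note that `choose_action` does not care how the model produces its numbers. The model could be hand-written rules, a learned predictor, or a lookup table, which mirrors the separation of goal from method described above.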

- Rational Agent: An entity that perceives its environment through sensors, processes that information, and produces actions through actuators in order to maximize its expected performance measure. Modern AI systems, from recommendation engines to self-driving cars, are designed and evaluated as rational agents.

- Artificial Intelligence: The field of computer science concerned with designing and building agents that perceive their environment and take actions to achieve goals, whether by acting humanly, thinking humanly, thinking rationally, or acting rationally.

Which Approach Is Best?

There is no single right answer. The four approaches suit different purposes:

- Building useful tools? Rational agents work well: they maximize expected performance.
- Understanding human cognition? Cognitive modeling is essential: it replicates mental processes.
- Creating companions or social AI? Acting humanly matters: conversation and empathy are the goal.
- Proving things rigorously? The laws-of-thought approach provides formal guarantees.

In this course, we primarily adopt the rational agent perspective, because it is the most tractable, general, and mathematically well-founded. However, the other approaches appear throughout — especially when we discuss ethics, language, and human-AI interaction.

Matching AI Systems to Approaches:

Consider four real systems:

- IBM Watson on Jeopardy!: found optimal answers from a large database to maximize score. This is acting rationally (rational agent: focused on best outcomes).
- A mental health chatbot: designed to feel like talking with a supportive human. This is acting humanly (Turing Test approach: mimicking human conversation).
- A computational neuroscience model: replicates visual processing in the primate cortex. This is thinking humanly (cognitive modeling: reproducing brain processes).
- A logic-based expert system: applies formal rules to reach medical diagnoses. This is thinking rationally (laws of thought: formal logical reasoning).

Does seeming human really mean something is intelligent? Could a system fool a Turing Test evaluator without genuinely understanding anything it says?

Consider: If a calculator gives the correct answer to every arithmetic question you ask it, is it "doing math" — or just retrieving pre-computed results? Where would you draw the line between intelligence and very clever pattern matching?

Test your understanding of the four definitional approaches to AI.

Now that you understand what AI is and the different lenses through which researchers define it, the next section traces how the field developed over seventy years of breakthroughs, setbacks, and reinvention.

Original content for CSC 114: Artificial Intelligence I, Central Piedmont Community College.

This work is licensed under CC BY-SA 4.0.