A Brief History of Artificial Intelligence

Unit 1: Foundations of Artificial Intelligence — Section 1.2

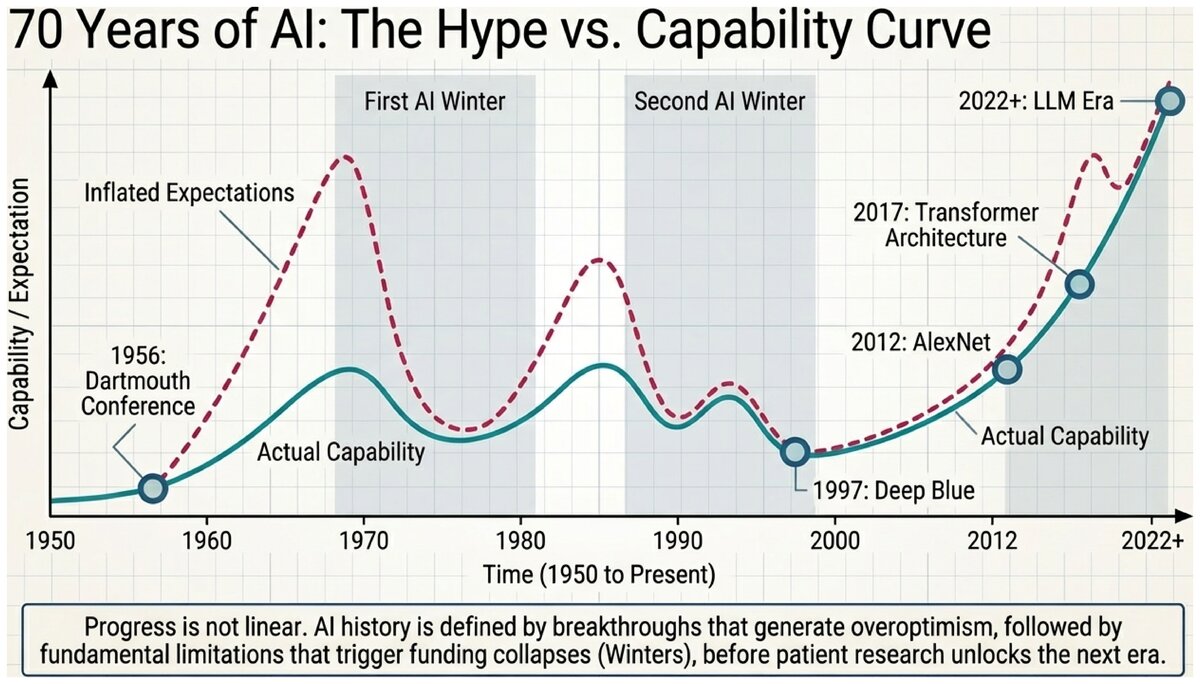

AI’s history is a story of brilliant ideas, inflated expectations, harsh setbacks, and genuine breakthroughs. Understanding this history helps us appreciate both how far the field has come and why certain problems remain stubbornly difficult.

This Crash Course episode covers AI winters, computing power evolution, and the big data revolution. Start at 5:15 for the historical section.

The Birth of AI: 1943 — 1956

The roots of AI reach back to several converging lines of work in mathematics, logic, and neuroscience.

1943: McCulloch and Pitts publish the first mathematical model of a neuron, showing that networks of simple on/off threshold units could in principle compute any logical (Boolean) function. This was one of the first formal arguments that intelligence could be mechanized.
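The idea of a neuron as a threshold unit can be sketched in a few lines of Python. This is a minimal illustration, not the 1943 paper's own formalism. It models inhibition with a negative weight, which is a common modern simplification: in the original model, any active inhibitory input simply blocked firing. The helper names `mp_unit`, `and_gate`, `or_gate`, `not_gate`, and `xor_net` are hypothetical. A single unit computes AND, OR, or NOT, and wiring units together computes XOR, which no single unit of this kind can.

```python
def mp_unit(inputs, weights, threshold):
    """Return 1 (fire) if the weighted sum of binary inputs reaches the threshold, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def and_gate(a, b):
    # Fires only when both inputs are on (1 + 1 >= 2).
    return mp_unit((a, b), (1, 1), threshold=2)


def or_gate(a, b):
    # Fires when at least one input is on.
    return mp_unit((a, b), (1, 1), threshold=1)


def not_gate(a):
    # A negative (inhibitory) weight: fires only when the input is off.
    return mp_unit((a,), (-1,), threshold=0)


def xor_net(a, b):
    # A small network of units: (a OR b) AND NOT (a AND b).
    return and_gate(or_gate(a, b), not_gate(and_gate(a, b)))


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", and_gate(a, b), "OR:", or_gate(a, b), "XOR:", xor_net(a, b))
```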

1950: Alan Turing publishes "Computing Machinery and Intelligence," proposing the Turing Test and arguing that the question "Can machines think?" can be given a meaningful operational answer.

1956: The Dartmouth Conference brings together researchers John McCarthy, Marvin Minsky, Claude Shannon, Herbert Simon, Allen Newell, and others to spend a summer exploring "thinking machines." The term artificial intelligence, which McCarthy had introduced in the 1955 proposal for the workshop, becomes the name of the new field. The conference is retrospectively considered the founding event of AI as an academic discipline.

Dartmouth Conference: A 1956 summer workshop at Dartmouth College, organized by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, at which the name "artificial intelligence" (introduced in the organizers' 1955 proposal) was adopted and the field formally established as a research program.

Simon and Newell predicted in 1958: "Within ten years a digital computer will be the world's chess champion." A computer did not defeat a reigning world champion in a match until 1997, when IBM's Deep Blue beat Garry Kasparov, nearly four decades later. Early AI researchers consistently underestimated the difficulty of general reasoning and the amount of world knowledge required to excel at even narrow tasks.

Early Enthusiasm: 1952 — 1969

The 1950s and 1960s produced remarkable early successes that seemed to confirm that general AI was just around the corner.

1952: Arthur Samuel’s checkers program learned to play better than its creator through a form of self-play, one of the first demonstrations of machine learning.

1958: Frank Rosenblatt’s Perceptron showed that a simple mathematical model of a neuron could learn to classify inputs — a harbinger of modern neural networks.

1964: ELIZA, created by Joseph Weizenbaum at MIT, could mimic a psychotherapist well enough to fool some users into believing they were talking to a human. Weizenbaum was disturbed by how readily people attributed understanding to a system that had none.

1970: SHRDLU, developed by Terry Winograd at MIT between 1968 and 1970, could understand and execute natural language commands in a simulated blocks world: "Pick up the big red block and put it on the small green cube."

Generous funding flowed from the U.S. Advanced Research Projects Agency (ARPA, renamed DARPA in 1972), and researchers made confident predictions about imminent general AI.

ELIZA: An early natural language processing program (1964) created by Joseph Weizenbaum that simulated a Rogerian psychotherapist by reflecting users' statements back to them. ELIZA demonstrated both the potential and the danger of anthropomorphizing simple programs.
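The reflection trick ELIZA relied on can be sketched with regular expressions. This is a hypothetical, heavily simplified illustration, not a reproduction of Weizenbaum's program or its DOCTOR script: the patterns, the pronoun table, and the function names `reflect` and `respond` are all invented here. The point is how little machinery is needed to produce replies that feel attentive.

```python
import re

# Hypothetical pronoun swaps used to turn the user's words back toward them.
REFLECTIONS = {"i": "you", "me": "you", "my": "your", "am": "are", "you": "I", "your": "my"}

# Hypothetical (pattern, reply template) pairs, tried in order; {0} is the reflected fragment.
RULES = [
    (re.compile(r"i feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"i am (.*)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r".*\bmother\b.*", re.IGNORECASE), "Tell me more about your family."),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words, e.g. 'my job' -> 'your job'."""
    return " ".join(REFLECTIONS.get(word, word) for word in fragment.lower().split())


def respond(statement: str) -> str:
    """Return the reply for the first matching rule, or a neutral prompt if none match."""
    cleaned = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.fullmatch(cleaned)
        if match:
            return template.format(*(reflect(group) for group in match.groups()))
    return "Please go on."


if __name__ == "__main__":
    for line in ["I feel anxious about my exams.", "My mother called today.", "The weather is nice."]:
        print("> " + line)
        print(respond(line))
```

No rule "understands" anything: a keyword match plus a pronoun swap is enough to echo the user's own words back as a question, which is exactly the kind of surface behavior Weizenbaum saw people over-interpret.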

Reality Check: 1966 — 1973 (First AI Winter)

The limits of these early systems became apparent within a decade. Three fundamental problems emerged:

Combinatorial explosion: Early programs worked on tiny "toy" problems but could not scale. Real-world problems had too many possibilities to enumerate, and the search space grew exponentially.

Lack of world knowledge: Programs could reason from given facts but did not know basic things about the world. They lacked the common-sense knowledge that humans acquire through childhood experience.

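The perceptron limitation: In 1969, Minsky and Papert's book Perceptrons proved that single-layer perceptrons cannot represent some simple functions, such as XOR (exclusive or, which outputs 1 when exactly one of its two inputs is 1), because no single straight line separates the inputs that should produce 1 from those that should produce 0. In the years that followed, funding for neural network research largely dried up.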
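The XOR limitation is easy to reproduce. The sketch below trains a single-layer perceptron with the classic error-correction rule, nudging the weights toward the target after each mistake; the learning rate, the 100-epoch cap, and the function names are arbitrary choices for illustration. On AND, which is linearly separable, training stops making mistakes after a few passes. On XOR it never does, because any single line through the input square misclassifies at least one of the four points.

```python
from typing import List, Tuple

Example = Tuple[Tuple[int, int], int]  # ((x1, x2), target)

AND_DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR_DATA: List[Example] = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]


def step(z: float) -> int:
    """Threshold activation: output 1 if the weighted sum is positive."""
    return 1 if z > 0 else 0


def train_perceptron(data: List[Example], epochs: int = 100, lr: float = 1.0):
    """Perceptron learning rule; returns (weights, bias, converged)."""
    w = [0.0, 0.0]
    b = 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), target in data:
            error = target - step(w[0] * x1 + w[1] * x2 + b)
            if error != 0:
                # Shift the decision boundary toward classifying this point correctly.
                mistakes += 1
                w[0] += lr * error * x1
                w[1] += lr * error * x2
                b += lr * error
        if mistakes == 0:
            return w, b, True  # a full pass with no mistakes: training has converged
    return w, b, False


def accuracy(w, b, data: List[Example]) -> float:
    correct = sum(step(w[0] * x1 + w[1] * x2 + b) == target for (x1, x2), target in data)
    return correct / len(data)


if __name__ == "__main__":
    for name, data in [("AND", AND_DATA), ("XOR", XOR_DATA)]:
        w, b, converged = train_perceptron(data)
        print(name, "converged:", converged, "accuracy:", accuracy(w, b, data))
```

Raising the epoch cap does not help with XOR: the failure comes from what a single linear threshold can represent, not from too little training.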

The result was the first AI winter — a period of reduced funding, dashed expectations, and public skepticism toward AI claims.

AI Winter: A period of reduced funding and interest in AI research, typically following a cycle of over-promising and under-delivering. Two major AI winters occurred: the first in the early 1970s, the second in the late 1980s.

Expert Systems Boom: 1969 — 1986

AI found new life through knowledge-based systems. The key insight was simple: intelligence requires knowledge. So researchers built systems with extensive domain expertise encoded as rules.

Two landmark expert systems:

DENDRAL (1965-1972): Inferred the molecular structure of organic compounds from mass spectrometry data, matching or exceeding human expert performance. It was among the first programs to demonstrate that a computer could reach expert-level conclusions in a specialized domain.

MYCIN (1972-1980): Diagnosed blood infections and recommended antibiotic treatments. In one frequently cited evaluation, its accuracy of approximately 69% exceeded the roughly 65% achieved by senior physicians at Stanford, an early demonstration that an AI system could match or outperform domain experts on a narrow medical task. MYCIN itself was never used in routine clinical practice.

By the 1980s, corporations had invested billions in expert systems for medical diagnosis, equipment troubleshooting, financial analysis, and manufacturing control.

But problems emerged:

- Building an expert system required months or years of interviewing human experts to extract and encode their knowledge, an expensive and error-prone process.
- Systems were brittle: they failed unpredictably when confronted with situations outside their rule base.
- Maintenance was expensive: updating rules when the domain changed required expert programmers.

A second AI winter began around 1987 as companies pulled back from costly systems that did not deliver the promised returns.

Expert System: An AI program that uses a knowledge base of human-expert-derived rules and an inference engine to solve problems in a specific domain. Expert systems were the dominant AI paradigm of the 1970s and 1980s before being largely superseded by machine learning approaches.
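The split between a knowledge base and an inference engine can be sketched with a tiny forward-chaining engine, which repeatedly fires any rule whose conditions are all known facts until nothing new can be derived. This is an illustrative assumption, not how any particular historical system worked (MYCIN, for example, reasoned backward from hypotheses and attached certainty factors to its conclusions). The car-troubleshooting rules and the name `forward_chain` are hypothetical.

```python
from typing import FrozenSet, List, Set, Tuple

# A rule pairs a set of required facts with the fact it concludes.
Rule = Tuple[FrozenSet[str], str]

# Knowledge base: hypothetical car-troubleshooting rules, not taken from any real system.
RULES: List[Rule] = [
    (frozenset({"cranks", "no_start", "fuel_gauge_empty"}), "out_of_fuel"),
    (frozenset({"no_crank", "lights_dim"}), "weak_battery"),
    (frozenset({"out_of_fuel"}), "recommend_refuel"),
    (frozenset({"weak_battery"}), "recommend_jump_start"),
]


def forward_chain(facts: Set[str], rules: List[Rule]) -> Set[str]:
    """Inference engine: fire rules until no new fact can be derived; return the new facts."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when every one of its conditions is already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known - set(facts)


if __name__ == "__main__":
    print(sorted(forward_chain({"no_crank", "lights_dim"}, RULES)))
    # ['recommend_jump_start', 'weak_battery']
    print(sorted(forward_chain({"strange_noise"}, RULES)))
    # []
```

The second call illustrates brittleness: a symptom the rule base never mentions produces no conclusion at all, and extending coverage means writing and maintaining more rules by hand.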

The Probabilistic Revolution: 1987 — 2000

Researchers recognized that uncertainty is not an edge case — it is fundamental to almost every real-world decision. Rather than brittle rule-based systems, they built probabilistic models:

- Bayesian networks for reasoning under uncertainty (Judea Pearl, 1988)
- Hidden Markov models for speech recognition
- Statistical machine learning algorithms that learned patterns from data

This shift proved enormously productive. Speech recognition, for example, was intractable for rule-based systems but became manageable with probabilistic models.
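Reasoning under uncertainty with a Bayesian network can be illustrated with the textbook rain/sprinkler/wet-grass example. Everything here is hypothetical: the three variables, the probabilities, and the helper names are invented for illustration. Inference is done by brute-force enumeration of every possible world, which only works for tiny networks; practical systems use more efficient algorithms.

```python
from itertools import product

# Hypothetical network: Rain -> WetGrass <- Sprinkler, with made-up probabilities.
P_RAIN = 0.2
P_SPRINKLER = 0.1
P_WET_GIVEN = {  # P(grass wet | rain, sprinkler)
    (True, True): 0.99,
    (True, False): 0.9,
    (False, True): 0.8,
    (False, False): 0.0,
}


def joint(rain: bool, sprinkler: bool, wet: bool) -> float:
    """P(rain, sprinkler, wet), factored along the network: P(R) * P(S) * P(W | R, S)."""
    p = (P_RAIN if rain else 1 - P_RAIN) * (P_SPRINKLER if sprinkler else 1 - P_SPRINKLER)
    p_wet = P_WET_GIVEN[(rain, sprinkler)]
    return p * (p_wet if wet else 1 - p_wet)


def prob_rain_given(evidence: dict) -> float:
    """P(rain = True | evidence), summing the joint over every world consistent with the evidence."""
    numerator = denominator = 0.0
    for rain, sprinkler, wet in product([True, False], repeat=3):
        world = {"rain": rain, "sprinkler": sprinkler, "wet": wet}
        if any(world[name] != value for name, value in evidence.items()):
            continue  # this world contradicts what we observed
        p = joint(rain, sprinkler, wet)
        denominator += p
        if rain:
            numerator += p
    return numerator / denominator


if __name__ == "__main__":
    print("P(rain)                  =", round(prob_rain_given({}), 3))
    print("P(rain | wet)            =", round(prob_rain_given({"wet": True}), 3))
    print("P(rain | wet, sprinkler) =", round(prob_rain_given({"wet": True, "sprinkler": True}), 3))
```

With these made-up numbers, observing wet grass raises the probability of rain from 0.2 to about 0.74, and additionally learning that the sprinkler was on lowers it to about 0.24, because the sprinkler "explains away" the wet grass. A rule-based system has no natural way to express this kind of graded revision of belief.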

Neural Networks Return: 1986 — 2000

The backpropagation algorithm, described by Rumelhart, Hinton, and Williams in 1986, showed how to train multi-layer neural networks by propagating error signals backward through the network. This revived neural network research, but practical applications remained limited: the networks required enormous computational resources and huge datasets that did not yet exist.
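The mechanics of backpropagation can be sketched in plain Python on XOR, the pattern that a single-layer perceptron cannot represent. This is a minimal sketch under stated assumptions, not the setup of the 1986 paper: the network shape (2 inputs, 4 hidden sigmoid units, 1 output), the halved mean-squared-error loss, the learning rate, the seed, and the class name `TinyNet` are all illustrative choices. The `gradients` method is the backpropagation step: it computes an error signal at the output and sends it backward through the output weights to each hidden unit.

```python
import math
import random

# XOR truth table: ((input1, input2), target)
XOR_DATA = [((0.0, 0.0), 0.0), ((0.0, 1.0), 1.0), ((1.0, 0.0), 1.0), ((1.0, 1.0), 0.0)]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class TinyNet:
    """Hypothetical 2-input, `hidden`-unit, 1-output network with sigmoid activations."""

    def __init__(self, hidden=4, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-1, 1), rng.uniform(-1, 1)] for _ in range(hidden)]
        self.b1 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.w2 = [rng.uniform(-1, 1) for _ in range(hidden)]
        self.b2 = rng.uniform(-1, 1)

    def forward(self, x):
        """Return (hidden activations, output) for one input pair."""
        h = [sigmoid(row[0] * x[0] + row[1] * x[1] + b) for row, b in zip(self.w1, self.b1)]
        y = sigmoid(sum(w * hj for w, hj in zip(self.w2, h)) + self.b2)
        return h, y

    def loss(self, data):
        """Mean over examples of 0.5 * (output - target)^2."""
        return sum(0.5 * (self.forward(x)[1] - t) ** 2 for x, t in data) / len(data)

    def gradients(self, data):
        """Backpropagation: push each example's error from the output back to every weight."""
        n = len(data)
        gw1 = [[0.0, 0.0] for _ in self.w1]
        gb1 = [0.0] * len(self.b1)
        gw2 = [0.0] * len(self.w2)
        gb2 = 0.0
        for x, t in data:
            h, y = self.forward(x)
            # Error signal at the output unit's input: (y - t) * sigmoid'(z) / n
            delta_out = (y - t) * y * (1.0 - y) / n
            gb2 += delta_out
            for j, hj in enumerate(h):
                gw2[j] += delta_out * hj
                # Send the error backward through weight w2[j] and hidden unit j's sigmoid
                delta_h = delta_out * self.w2[j] * hj * (1.0 - hj)
                gw1[j][0] += delta_h * x[0]
                gw1[j][1] += delta_h * x[1]
                gb1[j] += delta_h
        return gw1, gb1, gw2, gb2

    def train(self, data, lr=2.0, epochs=5000):
        """Full-batch gradient descent using the backpropagated gradients."""
        for _ in range(epochs):
            gw1, gb1, gw2, gb2 = self.gradients(data)
            for j in range(len(self.w2)):
                self.w1[j][0] -= lr * gw1[j][0]
                self.w1[j][1] -= lr * gw1[j][1]
                self.b1[j] -= lr * gb1[j]
                self.w2[j] -= lr * gw2[j]
            self.b2 -= lr * gb2


if __name__ == "__main__":
    net = TinyNet(hidden=4, seed=0)
    print("loss before training:", round(net.loss(XOR_DATA), 4))
    net.train(XOR_DATA)
    print("loss after training:", round(net.loss(XOR_DATA), 4))
    for x, t in XOR_DATA:
        print(x, "target", t, "output", round(net.forward(x)[1], 3))
```

Training typically drives the outputs toward 0, 1, 1, 0, but a network this small can occasionally stall in a poor local minimum; another seed or more hidden units usually helps. A standard sanity check for any backpropagation code is to compare its gradients with finite differences of the loss, which should agree closely.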

The Big Data Era: 2001 — 2010

The internet created something unprecedented: massive datasets generated by billions of users. Google, Amazon, Netflix, and others showed that learning algorithms trained on billions of examples could dramatically outperform rule-based systems on tasks such as web search, product recommendation, and spam detection.

The key lesson: for many tasks, more data can matter more than a better algorithm.

The Deep Learning Explosion: 2011 — Present

Three factors converged to produce the modern AI boom:

- Deep neural networks with many layers could learn rich hierarchical representations.
- Big data provided the training signal needed to fit those networks.
- GPU computing made training large networks fast enough to be practical.

2012: AlexNet, a convolutional neural network trained on ImageNet, dramatically outperformed all prior approaches on image recognition, winning the ImageNet Large Scale Visual Recognition Challenge by a wide margin. This result is widely considered the moment deep learning became mainstream.

2016: AlphaGo (DeepMind) defeated Lee Sedol, one of the world's top Go players, four games to one. Go has more possible board positions than there are atoms in the observable universe and had long been considered a pinnacle of human intuition.

2017: The Transformer architecture (Vaswani et al., "Attention Is All You Need") revolutionized natural language processing, enabling the large language models that followed.

2022-present: ChatGPT, GPT-4, and similar large language models demonstrate remarkable fluency in text generation, code writing, and multi-step reasoning, bringing AI capabilities to widespread public attention.

Deep Learning: A family of machine learning methods based on neural networks with many layers, capable of learning hierarchical representations from raw data. Deep learning underlies virtually every major AI breakthrough since 2012, from image recognition to language generation.

The Pattern of Progress

AI history reveals a recurring cycle:

- A new technique produces early, impressive successes.
- Researchers and funders extrapolate to general intelligence, and predictions become overoptimistic.
- Fundamental limitations appear that the technique cannot solve.
- Funding and interest decline (AI winter).
- Patient researchers make steady incremental progress.
- New ideas, data, or computing power unlock the next generation of capability.

Recognizing this pattern should make us simultaneously enthusiastic about current progress and cautious about near-term predictions of human-level AI.

AI has followed boom-bust-boom cycles throughout its history. We are currently in what many call an "AI spring."

Based on what you have read, what do you think could cause a third AI winter? Are there fundamental limits to current deep learning approaches that might trigger another disappointment? Or do you think the current wave is different from previous ones? Be specific about the evidence on both sides.


With a grasp of where AI has been, you are ready to assess where it stands today — what AI can actually do, what it still cannot do, and the critical distinction between narrow AI and the theoretical possibility of artificial general intelligence.

Original content for CSC 114: Artificial Intelligence I, Central Piedmont Community College.

This work is licensed under CC BY-SA 4.0.