What Is Machine Learning?

Unit 8: Machine Learning Foundations (Capstone) — Section 8.1

For seven weeks you have written AI systems that follow explicit instructions — search algorithms that navigate state spaces, logic systems that fire inference rules, probability tables that encode what we already know about a domain. Now consider this question: what if you do not know the rules up front? What if the problem is so complex that no human expert can write them down? Machine learning answers that question by letting systems discover rules directly from data.


The Core Idea: Learning from Experience

The most widely quoted definition of machine learning comes from Tom Mitchell (1997):

"A computer program is said to learn from experience E with respect to some class of tasks T and performance measure P, if its performance at tasks in T, as measured by P, improves with experience E."

— Tom Mitchell, Machine Learning, 1997

Let’s ground that abstraction in the example of email spam filtering.

ML Framework Applied: Spam Filtering

- Task (T): Classify incoming emails as spam or not spam
- Experience (E): A database of thousands of emails, each labeled spam or not spam by a human
- Performance (P): The percentage of emails correctly classified

The system studies the labeled examples and learns patterns — certain words, sender addresses, and formatting choices — that distinguish spam from legitimate mail. Over time, as it sees more examples, its classification accuracy improves. No programmer ever wrote a rule that said "if the subject contains 'FREE MONEY' it’s spam." The system discovered that rule from data.
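The T, E, P framing can be made concrete with a minimal sketch. Everything here is an assumption made for illustration: the six training emails are invented, the function names `train`, `classify`, and `accuracy` are hypothetical, and the word-count scoring is a deliberately crude stand-in for a real spam filter.

```python
from collections import Counter

# Experience (E): a tiny, made-up set of labeled emails (illustrative only).
TRAINING_EMAILS = [
    ("free money claim your prize now", "spam"),
    ("win free cash instantly", "spam"),
    ("limited offer free prize", "spam"),
    ("meeting agenda for monday", "not_spam"),
    ("lunch on friday with the team", "not_spam"),
    ("please review the project report", "not_spam"),
]


def train(emails):
    """Count how often each word appears under each label."""
    counts = {"spam": Counter(), "not_spam": Counter()}
    for text, label in emails:
        counts[label].update(text.split())
    return counts


def classify(counts, text):
    """Task (T): pick the label whose training emails used these words more."""
    words = text.split()
    spam_score = sum(counts["spam"][w] for w in words)
    ham_score = sum(counts["not_spam"][w] for w in words)
    return "spam" if spam_score > ham_score else "not_spam"


def accuracy(counts, emails):
    """Performance (P): fraction of emails classified correctly."""
    correct = sum(classify(counts, text) == label for text, label in emails)
    return correct / len(emails)


model = train(TRAINING_EMAILS)
held_out = [
    ("claim your free cash", "spam"),
    ("project meeting on friday", "not_spam"),
]
print(classify(model, "free prize inside"))
print(accuracy(model, held_out))
```

Nothing in the code mentions "free" or "prize" as suspicious; those associations come entirely from the labeled examples.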

ML vs. Traditional Programming

The key conceptual shift is the direction of the arrow between data, rules, and output.

| Traditional Programming | Machine Learning |
|---|---|
| Rules + Data → Output | Data + Output → Rules |
| Programmer writes explicit rules | System discovers patterns from examples |
| Good when rules are known and stable | Good when rules are complex or unknown |
| Example: payroll calculator, GPS route | Example: image recognition, spam filter |

In traditional programming, the programmer encodes knowledge. In machine learning, the data encodes knowledge and the algorithm extracts it. The programmer’s job shifts from writing rules to selecting algorithms, curating data, and evaluating results.
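A small sketch of the two directions, under stated assumptions: the single feature (number of exclamation marks), the hand-picked threshold of 3, and the six example pairs are all hypothetical, and choosing the threshold with the fewest training errors is just one simple way to "extract" a rule from data.

```python
# Traditional programming: a human writes the rule (Rules + Data -> Output).
def is_spam_by_rule(exclamation_marks):
    return exclamation_marks >= 3  # threshold hand-picked by the programmer


# Machine learning: the rule is inferred from examples (Data + Output -> Rules).
def learn_threshold(examples):
    """Return the candidate threshold that misclassifies the fewest examples."""
    def errors(threshold):
        return sum((count >= threshold) != is_spam for count, is_spam in examples)

    candidates = sorted({count for count, _ in examples})
    return min(candidates, key=errors)


# Hypothetical (exclamation mark count, is_spam) pairs, made up for illustration.
examples = [(0, False), (1, False), (1, False), (4, True), (5, True), (7, True)]
learned_threshold = learn_threshold(examples)


def is_spam_learned(exclamation_marks):
    return exclamation_marks >= learned_threshold


print(learned_threshold)
print(is_spam_by_rule(3), is_spam_learned(3))
```

Both functions have the same shape. What differs is where the threshold came from: the programmer's judgment in the first case, the data in the second.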

Why Introduce ML in Week 8?

Machine learning is not a replacement for everything you have studied — it is the culmination.

ML Connects to Every Prior Unit:

- Units 3–4 (Search & Optimization): Training a machine learning model is an optimization problem: we search the space of possible model parameters to minimize prediction error. Gradient descent is the continuous counterpart of hill climbing (Unit 4), taking repeated small steps downhill on the error surface.
- Units 5–6 (Logic & Knowledge): Decision trees (Section 8.2) learn if-then rules from data. They are, in effect, automatically constructed knowledge bases.
- Unit 7 (Probability): Naive Bayes (Unit 7) is a machine learning algorithm. Many ML models output a probability estimate of the form P(class | features), which is why probability underpins so much of ML.
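The link to search and optimization can be sketched with gradient descent on a one-parameter model. This is an illustration under assumptions, not a method prescribed by the course: the model `y = w * x`, the three data points, the learning rate, and the step count are all chosen here for simplicity.

```python
def mean_squared_error(w, data):
    """Prediction error of the model y = w * x on (x, y) pairs."""
    return sum((w * x - y) ** 2 for x, y in data) / len(data)


def gradient(w, data):
    """Derivative of the mean squared error with respect to w."""
    return sum(2 * x * (w * x - y) for x, y in data) / len(data)


def gradient_descent(data, w=0.0, learning_rate=0.05, steps=200):
    """Search parameter space by repeatedly stepping downhill on the error."""
    for _ in range(steps):
        w -= learning_rate * gradient(w, data)
    return w


# Made-up data generated by y = 2x, so the best parameter is w = 2.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w = gradient_descent(data)
print(round(w, 4), mean_squared_error(w, data) < mean_squared_error(0.0, data))
```

Each step is a local move toward lower error, much as hill climbing makes local moves toward a higher objective value.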

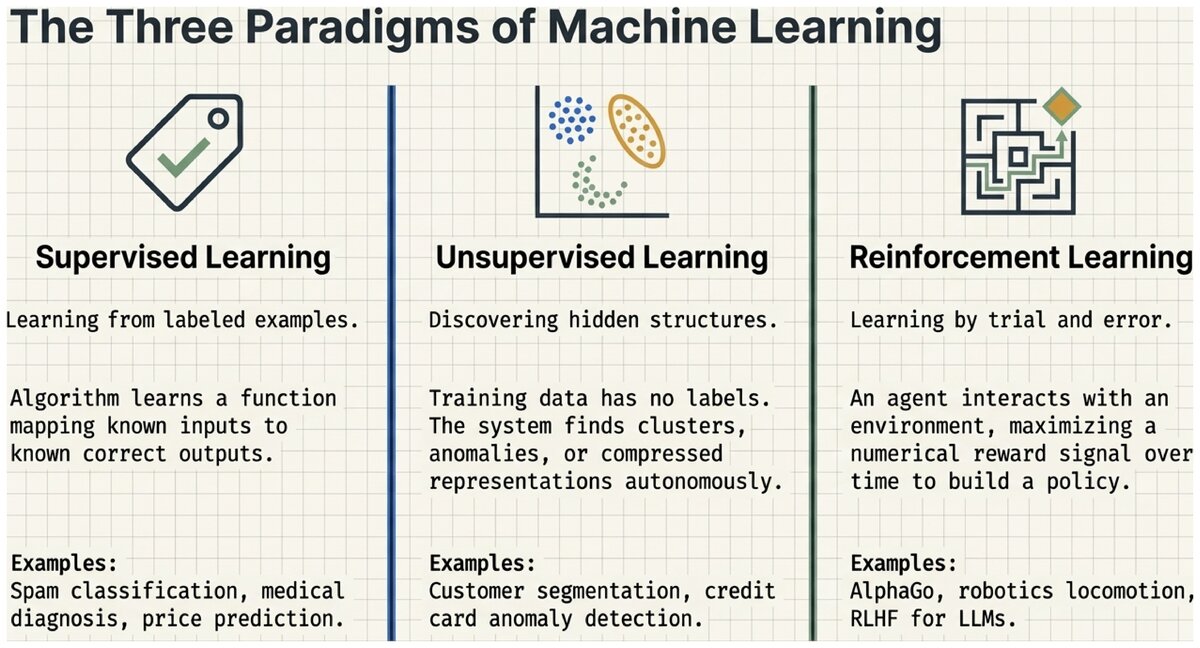

The Three Paradigms of Machine Learning

Machine learning divides into three paradigms based on the type of experience available during learning.

Supervised Learning

In supervised learning, every training example comes with a correct output label, typically provided by a human annotator or taken from recorded outcomes (such as the actual sale price of a house). The algorithm learns a mapping from inputs to outputs by studying these (input, label) pairs.

Examples: spam classification, medical diagnosis, house price prediction, handwriting recognition.

This is the most mature and widely deployed paradigm, and it is the focus of Sections 8.2 and 8.3.

- Supervised Learning: A machine learning paradigm in which the training data consists of labeled examples, with each input paired with the correct output. The algorithm learns a function that maps new inputs to predicted outputs.
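As a minimal sketch of learning from (input, label) pairs, the following uses a 1-nearest-neighbor classifier. The choice of algorithm, the two-dimensional points, and the labels "small" and "large" are assumptions made for illustration; the course's own supervised methods are covered in later sections.

```python
def nearest_neighbor_predict(training_pairs, x):
    """Predict the label of x as the label of the closest training input."""
    def squared_distance(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    _, label = min(training_pairs, key=lambda pair: squared_distance(pair[0], x))
    return label


# Made-up labeled examples: each input (a 2-D point) is paired with its label.
training_pairs = [
    ((1.0, 1.0), "small"),
    ((1.5, 2.0), "small"),
    ((5.0, 5.0), "large"),
    ((6.0, 5.5), "large"),
]

print(nearest_neighbor_predict(training_pairs, (1.2, 1.4)))
print(nearest_neighbor_predict(training_pairs, (5.5, 5.0)))
```

The learned "function" here is implicit: the stored examples plus the distance rule together map any new input to a predicted output.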

Unsupervised Learning

In unsupervised learning, no labels are provided. The algorithm must find structure — patterns, clusters, or compressed representations — in the raw data on its own.

Examples: customer segmentation (grouping shoppers by behavior), anomaly detection (flagging unusual credit card transactions), topic modeling in document collections.

- Unsupervised Learning: A machine learning paradigm in which training data has no labels. The algorithm discovers hidden structure, clusters, or patterns in the input data without being told the "correct" answer.
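A short sketch of finding clusters without labels, using a one-dimensional k-means loop. The algorithm choice, the spending figures, and the starting centers are assumptions for illustration only.

```python
def k_means_1d(values, centers, iterations=10):
    """Alternate between assigning values to the nearest center and re-centering."""
    clusters = [[] for _ in centers]
    for _ in range(iterations):
        clusters = [[] for _ in centers]
        for v in values:
            nearest = min(range(len(centers)), key=lambda i: abs(v - centers[i]))
            clusters[nearest].append(v)
        # Move each center to the mean of its cluster (keep it if the cluster is empty).
        centers = [
            sum(c) / len(c) if c else centers[i] for i, c in enumerate(clusters)
        ]
    return centers, clusters


# Made-up weekly spending per shopper; no labels are given.
spend = [12, 15, 14, 90, 95, 100]
centers, clusters = k_means_1d(spend, centers=[0.0, 50.0])
print(centers)
print(clusters)
```

No one told the algorithm that there are "low spenders" and "high spenders"; the two groups emerge from the distances between the values.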

Reinforcement Learning

In reinforcement learning, an agent learns by interacting with an environment. It takes actions, observes the results, and receives numerical rewards or penalties. Over many trials, it learns a policy — a strategy for choosing actions that maximizes long-term reward.

Examples: AlphaGo (which defeated Go champion Lee Sedol in 2016), robot locomotion, game-playing AI, and fine-tuning ChatGPT with reinforcement learning from human feedback (RLHF).

- Reinforcement Learning: A machine learning paradigm in which an agent learns by trial and error, receiving rewards for good actions and penalties for bad ones. The goal is to learn a policy that maximizes cumulative reward over time.
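Trial-and-error learning of a policy can be sketched with tabular Q-learning on a toy environment. All specifics are assumptions for illustration: a four-cell corridor with a reward of 1 at the right end, purely random exploration, and the values of `alpha`, `gamma`, and the episode count.

```python
import random

N_STATES = 4  # cells 0..3; cell 3 is the goal and ends the episode
ACTIONS = ("left", "right")


def step(state, action):
    """Environment: move one cell; reaching the goal yields reward 1."""
    next_state = max(0, state - 1) if action == "left" else state + 1
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Estimate long-term reward Q(state, action) from random trial and error."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state = 0
        while state != N_STATES - 1:
            action = rng.choice(ACTIONS)  # explore by acting at random
            next_state, reward = step(state, action)
            best_next = max(q[(next_state, a)] for a in ACTIONS)
            target = reward + gamma * best_next
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
    return q


q = q_learning()
# The learned policy picks, in each cell, the action with the highest estimate.
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)}
print(policy)
```

The agent is never told that moving right is correct. It only sees rewards, and the discount factor `gamma` makes actions that reach the goal sooner look better.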

Real-World Applications of Machine Learning

ML is now embedded in nearly every technology domain.

| Domain | Example Applications |
|---|---|
| Computer vision | Medical image analysis, self-driving car perception, facial recognition |
| Natural language | Spam filtering, machine translation, sentiment analysis, chatbots |
| Healthcare | Disease diagnosis from imaging, drug discovery, patient outcome prediction |
| Business & finance | Fraud detection, credit scoring, product recommendations |
| Speech & audio | Voice assistants (Siri, Alexa), speech-to-text, music recommendation |

- Training Data: The examples used to fit (train) a machine learning model; in supervised learning, these examples are labeled. The model adjusts its internal parameters to make accurate predictions on this data and is then evaluated on a separate test set of examples it has never seen.
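One common way to obtain a separate test set is to shuffle the available examples and hold some back. The sketch below assumes a 25% test fraction and a hypothetical helper named `train_test_split`; the example data is made up.

```python
import random


def train_test_split(examples, test_fraction=0.25, seed=0):
    """Shuffle a copy of the examples and hold out a fraction for testing."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


# Made-up labeled examples: (number, is_even).
examples = [(x, x % 2 == 0) for x in range(20)]
train_set, test_set = train_test_split(examples)
print(len(train_set), len(test_set))
```

Because no example appears in both sets, accuracy on `test_set` estimates how the model behaves on data it has not seen.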

Key Takeaways

Machine learning replaces hand-coded rules with pattern discovery. Instead of telling a computer how to solve a problem, we show it examples of solved problems and let it figure out the pattern. Three paradigms — supervised (labeled examples), unsupervised (find hidden structure), and reinforcement (learn from rewards) — cover the landscape of modern ML. This week focuses on supervised learning: the most widely used and industrially important paradigm.

Think about the AI systems you interact with every day — recommendation feeds, navigation apps, voice assistants.

- Which paradigm (supervised, unsupervised, reinforcement) do you think each one uses?
- What would the "training data" look like for a movie recommendation system?
- What makes machine learning appropriate for that task, as opposed to hand-written rules?


Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.