Agents and Environments

Unit 2: Intelligent Agents — Section 2.1

Your smartphone navigation app receives GPS coordinates, calculates a route, and tells you when to turn. A chess engine reads the board position and selects a move. A spam filter reads an email and decides where to put it. At first glance these systems seem unrelated — but they all share the same fundamental structure: they are agents.

What Is an Agent?

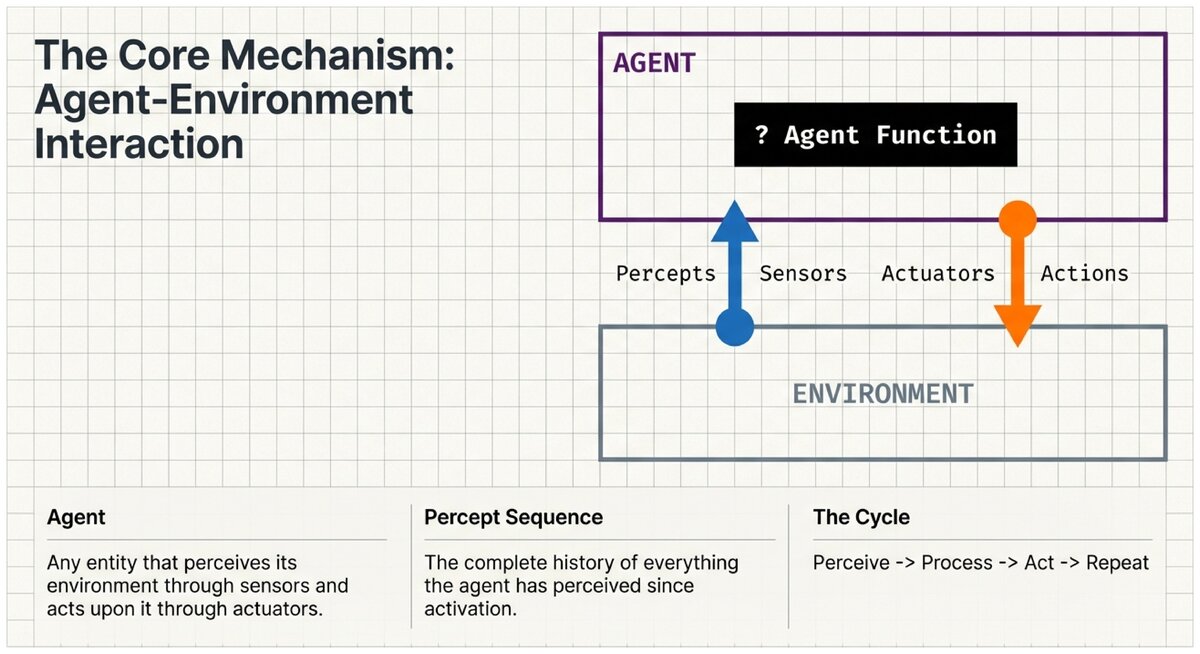

At its core, an agent is anything that can perceive its environment through sensors and act upon that environment through actuators.

That simple definition encompasses everything from thermostats to self-driving cars to large language models. The power of this perspective comes from the precision it brings when you need to analyze or design an intelligent system.

- Agent: An entity that perceives its environment through sensors and acts upon that environment through actuators. The term is intentionally broad: it includes software agents (a web crawler), robotic agents (a warehouse robot), and human agents.

- Percept: A single perceptual input at one moment in time, that is, what the agent "sees" or "senses" right now. A camera image, a temperature reading, a chess board state, or a line of text are all examples of percepts.

- Percept Sequence: The complete history of everything the agent has ever perceived, from the moment it started operating. Agent behavior can depend on the entire percept sequence, not just the latest percept.

Key Components

Every agent can be described in terms of five components:

Sensors receive raw information from the environment and pass it to the agent as percepts. A self-driving car’s sensors include cameras, LIDAR (laser range-finding), radar, GPS, and an accelerometer.

Percepts are the inputs the agent actually receives at each moment. The car’s camera delivers a video frame; the LIDAR delivers a point-cloud distance map; the GPS delivers latitude and longitude.

Actions are things the agent can do to affect the environment. The car can steer left or right, apply brake pressure, accelerate, and signal a lane change.

Actuators are the physical or virtual mechanisms that execute actions. The car’s actuators include steering motors, brake hydraulics, and the accelerator system.

The agent function is the mathematical mapping from percept sequences to actions. It describes — in principle — what the agent should do given any possible history of observations.

- Agent Function: A mathematical specification that maps every possible percept sequence to an action. The agent function is what we want the agent to do; in theory it can be infinitely large.

- Agent Program: The concrete, runnable implementation that approximates the agent function on real hardware. The program must be finite, executable, and fast enough to operate in real time.

The Agent Loop

Every agent operates in a continuous cycle:

The Perceive-Think-Act Cycle:

- Perceive: sensors deliver the current percept to the agent program

- Think: the agent program maps the percept (and possibly the percept history) to a chosen action

- Act: the agent executes the chosen action through its actuators

- Repeat: the environment changes in response to the action, and new percepts arrive

This loop runs continuously as long as the agent is operating. For a self-driving car it runs dozens of times per second. For a chess program it runs once per move.
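The cycle above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the `ThermostatEnvironment` and `ThermostatAgent` classes, the temperature dynamics, and the 20-degree target are all assumptions invented for the example (a thermostat is one of the simple agents mentioned earlier).

```python
from dataclasses import dataclass, field


@dataclass
class ThermostatEnvironment:
    """Hypothetical toy environment: a room that warms or cools each step."""
    temperature: float = 17.0

    def sense(self) -> float:
        # Sensor: deliver the current percept (a temperature reading).
        return self.temperature

    def apply(self, action: str) -> None:
        # Actuator: executing the action changes the environment.
        if action == "heat_on":
            self.temperature += 1.0
        else:
            self.temperature -= 0.5


@dataclass
class ThermostatAgent:
    """Hypothetical agent program that also records its percept sequence."""
    target: float = 20.0
    percepts: list = field(default_factory=list)

    def choose_action(self, percept: float) -> str:
        self.percepts.append(percept)  # keep the history of percepts
        return "heat_on" if percept < self.target else "heat_off"


def run(agent, env, steps):
    """Run the perceive-think-act cycle for a fixed number of steps."""
    actions = []
    for _ in range(steps):
        percept = env.sense()                  # perceive
        action = agent.choose_action(percept)  # think
        env.apply(action)                      # act
        actions.append(action)                 # repeat on the changed environment
    return actions


env = ThermostatEnvironment()
agent = ThermostatAgent()
history = run(agent, env, steps=5)
print(history)         # chosen actions, in order
print(agent.percepts)  # the percept sequence the agent has seen
```

Each pass through the `for` loop is one turn of the cycle: the action taken in one step changes the temperature that the sensor reports in the next.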

Agent Function vs. Agent Program

The distinction between the agent function and the agent program is important and often overlooked.

| Agent Function | Agent Program |
|---|---|
| Abstract mathematical description | Concrete implementation |
| Maps every possible percept sequence to an action | Actual code that runs on physical hardware |
| Could be infinitely large: one entry for every imaginable history | Must be finite and efficient enough to execute |
| Theoretical ideal | Practical reality |
| Example: "For every possible chess position, what is the best move?" | Example: A chess engine with minimax search and evaluation functions |

The agent function is what we would like the agent to do — it is often impossible to specify completely. The agent program is our best approximation of that function that we can actually run. Good AI design closes the gap between these two as much as possible.
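To make the size difference concrete, the sketch below writes out a tiny agent function as an explicit table and compares it with a compact program. Everything here is assumed for illustration: the three-symbol percept alphabet, the thermostat-style rule, and the helper names. The table is generated from the rule only so that its size can be counted; for a realistic task the ideal function is usually not available in closed form.

```python
from itertools import product

PERCEPTS = ("cold", "ok", "hot")  # hypothetical percept alphabet


def agent_program(percept_sequence):
    """Compact program: a one-line rule that looks at the latest percept."""
    latest = percept_sequence[-1]
    return "heat_on" if latest == "cold" else "heat_off"


def tabulate_agent_function(max_length):
    """Spell the same behavior out as a table with one entry for every
    percept sequence of length 1 up to max_length."""
    table = {}
    for length in range(1, max_length + 1):
        for sequence in product(PERCEPTS, repeat=length):
            table[sequence] = agent_program(sequence)
    return table


table = tabulate_agent_function(max_length=3)
print(len(table))                    # 3 + 9 + 27 entries
print(table[("hot", "ok", "cold")])  # same answer the rule gives

# Number of table entries needed for histories of up to t percepts.
for t in (5, 10, 20):
    entries = sum(len(PERCEPTS) ** k for k in range(1, t + 1))
    print(t, entries)
```

Even with only three possible percepts, the table needs tens of thousands of entries for histories of length 10 and billions for length 20, while the program stays a single rule.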

Examples Across Domains

Self-Driving Car

- Sensors: Cameras, LIDAR, radar, GPS, speedometer, accelerometer

- Percepts: Current camera frame, detected objects, current speed, GPS coordinates

- Actuators: Steering wheel motor, brake system, accelerator, turn signals

- Actions: Steer left 5°, apply brake pressure, accelerate, signal lane change

- Agent Function: Given road conditions, traffic, and destination, choose safe, legal actions to reach the destination

Email Spam Filter

- Sensors: Email server API, user feedback interface

- Percepts: Email content (sender, subject, body, attachments), user spam reports

- Actuators: Email routing system, folder management

- Actions: Move to inbox, move to spam, block sender

- Agent Function: Given email characteristics and feedback history, classify as spam or legitimate
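As a rough illustration of how the spam filter's percepts and actions fit together, here is a toy agent program. It is a sketch under stated assumptions, not how production filters work: the keyword list, the two-keyword threshold, the class and method names, and the example addresses are all hypothetical, and real filters typically rely on learned statistical models.

```python
from dataclasses import dataclass, field

SPAM_WORDS = {"winner", "free", "prize"}  # hypothetical keyword list


@dataclass
class SpamFilterAgent:
    """Toy agent program: keyword rule plus remembered user feedback."""
    reported_senders: set = field(default_factory=set)

    def perceive_report(self, sender: str) -> None:
        # Percept from the user feedback interface: a spam report.
        self.reported_senders.add(sender)

    def choose_action(self, sender: str, subject: str, body: str) -> str:
        # Percept from the mail server: one incoming email.
        if sender in self.reported_senders:
            return "block_sender"
        words = set((subject + " " + body).lower().split())
        if len(words & SPAM_WORDS) >= 2:  # assumed threshold
            return "move_to_spam"
        return "move_to_inbox"


agent = SpamFilterAgent()
first = agent.choose_action("promo@example.com", "Free prize", "You are a winner")
second = agent.choose_action("alice@example.com", "Lunch", "Are you free at noon?")
agent.perceive_report("promo@example.com")  # the user reports the sender
third = agent.choose_action("promo@example.com", "Hello", "Just checking in")
print(first, second, third)
```

The third call shows why the feedback history matters: the email itself contains no suspicious words, but the earlier spam report changes the chosen action.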

Chess-Playing AI

- Sensors: Board state representation (digital interface)

- Percepts: Current board position, legal moves, opponent’s last move

- Actuators: Move execution interface

- Actions: Any legal chess move

- Agent Function: Given board position history, choose the move most likely to lead to victory

Rational Agents

Defining an agent is easy. Defining a good agent requires the concept of rationality.

A rational agent is one that does the right thing — selecting actions that maximize its expected performance given the information available from its percept sequence and any built-in knowledge.

- Rational Agent: An agent that, for each possible percept sequence, selects the action expected to maximize its performance measure given the evidence of the percept sequence and whatever built-in knowledge the agent has.
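One way to write this definition symbolically is shown below. The notation is an assumption introduced here, not taken from the source: $p_{1:t}$ is the percept sequence observed so far, $K$ is the agent's built-in knowledge, $A$ is the set of available actions, and $U$ is the performance measure.

```latex
a_t^{*} \;=\; \arg\max_{a \in A} \; \mathbb{E}\bigl[\, U \;\big|\; p_{1:t},\; K,\; a \,\bigr]
```

The expectation is taken over outcomes the agent cannot observe directly, which is why the definition speaks of expected rather than actual performance.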

Three important nuances:

- Rationality is relative to what the agent knows. An agent cannot be blamed for failing to act on information it had no way to obtain.

- Rationality is not omniscience. A rational agent does the best it can with available information; it is not required to be perfect.

- Rationality requires learning. An agent that can update its knowledge based on experience becomes more rational over time.
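The idea of maximizing expected performance can be sketched with a small route-choice example, in the spirit of the navigation app from the introduction. The routes, probabilities, travel times, and function names below are invented for illustration, and the performance measure (negative expected travel time) is an assumption.

```python
# Hypothetical beliefs: action -> list of (probability, travel_minutes).
BELIEFS = {
    "highway":  [(0.8, 20.0), (0.2, 60.0)],  # usually fast, sometimes jammed
    "backroad": [(1.0, 30.0)],               # always the same
}


def expected_performance(outcomes):
    """Assumed performance measure: negative expected travel time."""
    return -sum(prob * minutes for prob, minutes in outcomes)


def rational_choice(beliefs):
    """Pick the action whose expected performance is highest."""
    return max(beliefs, key=lambda action: expected_performance(beliefs[action]))


choice = rational_choice(BELIEFS)
print(choice)
print(expected_performance(BELIEFS["highway"]))
print(expected_performance(BELIEFS["backroad"]))
```

Under these assumed numbers the highway has the better expected travel time (28 minutes versus 30), so choosing it is rational even on the days when it turns out to be jammed. That is the difference between rationality and omniscience.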

Consider a smoke detector. Is it an agent? What are its sensors and actuators? Is it rational?

Now consider what would make a smoke detector irrational — what design decisions could cause it to fail at its goal even when functioning as intended?


Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.