Agent Architectures

Unit 2: Intelligent Agents — Section 2.4

You know what an agent is, how to specify it with PEAS, and how to classify its environment. Now the central engineering question: how do you build the agent program?

The agent program is the internal mechanism that generates actions from percepts. Different problems call for different internal structures — different agent architectures. We examine four architectures in order of increasing sophistication.

From Section 2.3: environment properties determine which architecture is appropriate. A fully observable, deterministic environment can use a simple architecture. A partially observable, stochastic environment requires something more sophisticated. Keep the environment taxonomy in mind as you read each architecture below.

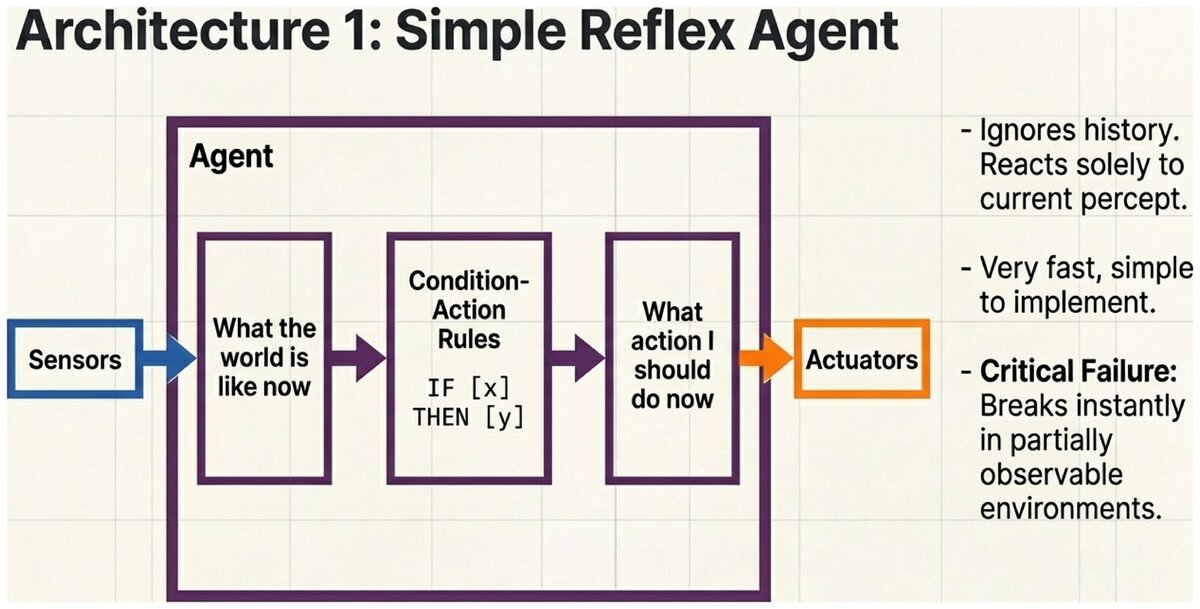

Architecture 1: Simple Reflex Agents

Simple Reflex Agent: An agent that selects actions based solely on the current percept, ignoring all prior history. It implements a set of condition-action rules: "IF this situation is observed, THEN take this action."

A simple reflex agent works like a collection of if-then rules. When a percept arrives, the agent scans its rules, finds the first rule whose condition matches, and executes the corresponding action.

Automatic Sliding Door

Rules:

- IF person approaches THEN open door
- IF no person detected THEN close door

The door does not remember whether it was open a minute ago — it reacts only to what its sensor tells it right now. This is exactly a simple reflex agent.
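The rule-matching loop described above can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the condition "person approaches" is approximated by a hypothetical `person_detected` flag in a dictionary percept, and the action names and the `simple_reflex_agent` function are assumptions made for this example.

```python
from typing import Callable, Dict, List, Tuple

Percept = Dict[str, bool]
# A condition-action rule: (test on the current percept, action to take if it passes)
Rule = Tuple[Callable[[Percept], bool], str]

# Hypothetical rules for the automatic sliding door
DOOR_RULES: List[Rule] = [
    (lambda p: p["person_detected"], "open_door"),
    (lambda p: not p["person_detected"], "close_door"),
]


def simple_reflex_agent(percept: Percept, rules: List[Rule] = DOOR_RULES) -> str:
    """Return the action of the first rule whose condition matches the percept.

    Nothing is remembered between calls: the choice depends only on this percept.
    """
    for condition, action in rules:
        if condition(percept):
            return action
    return "no_op"  # no rule matched


print(simple_reflex_agent({"person_detected": True}))   # open_door
print(simple_reflex_agent({"person_detected": False}))  # close_door
```

Because the function keeps no state between calls, the same percept always produces the same action. That is what makes the agent fast, and also what prevents it from reasoning about anything it cannot currently sense.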

Advantages:

- Very simple to implement and understand
- Fast: no complex reasoning required
- Works well in fully observable, deterministic environments where the correct action depends only on the current percept

Limitations:

- Fails in partially observable environments (cannot account for what it cannot see)
- No planning or anticipation of consequences
- Can get stuck in infinite loops, because it cannot tell that it has been in the same situation before
- Cannot handle novel situations not covered by its rules

Best suited for: Fully observable environments with simple, reactive tasks where speed is critical.

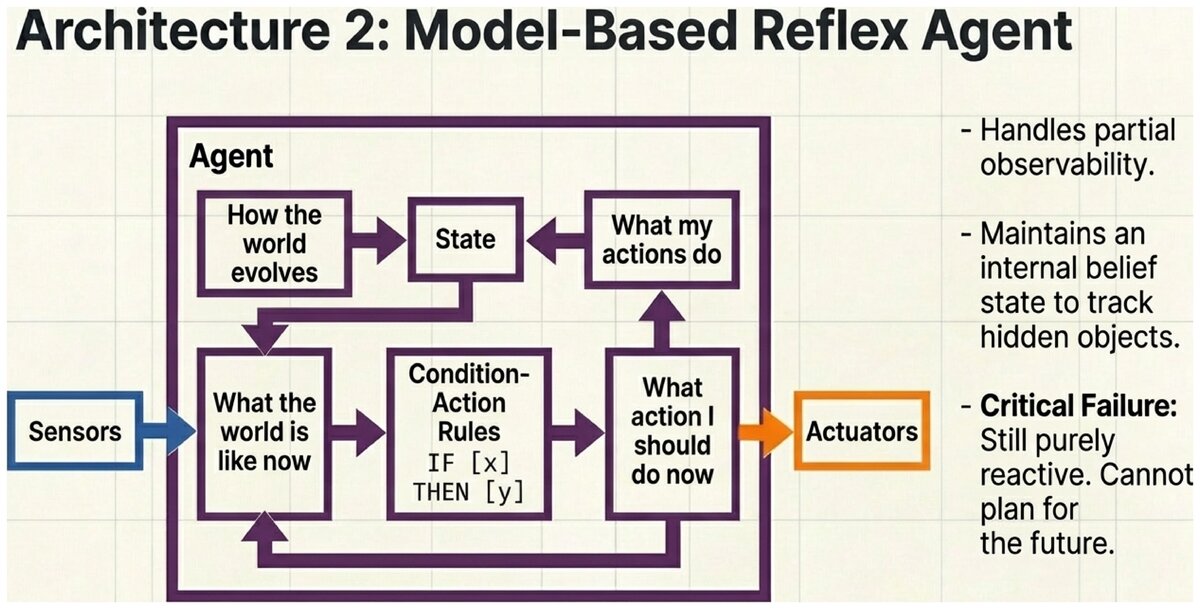

Architecture 2: Model-Based Reflex Agents

Model-Based Reflex Agent: An agent that maintains an internal state (a representation of parts of the world that are not currently visible) to handle partial observability. The internal state is updated using a transition model (how the world changes) and a sensor model (how world states map to percepts).

When a car passes behind a truck and disappears from a camera’s view, a simple reflex agent treats it as if the car never existed. A model-based agent maintains a belief: "There is probably still a car there, moving at roughly the speed I last observed."

The agent updates this internal state on every time step using two models:

- Transition model: How does the world change over time, both on its own and as a result of my actions?
- Sensor model: Given the world’s true state, what percepts would I expect to receive?

Self-Driving Car Tracking Hidden Vehicles

Situation: A car passes behind a large delivery truck. The car is no longer visible to the cameras.

A simple reflex agent: ignores the car (it is not in the current percept).

A model-based agent: maintains internal belief that a car exists behind the truck, estimates its likely position and speed based on its last known trajectory, and adjusts driving behavior accordingly — leaving extra following distance, signaling cautiously.

The model-based agent’s internal state includes: {location: (behind truck), speed: ~55 mph, confidence: 0.85}.
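The sketch below shows the core update loop of a model-based reflex agent for the hidden-car example. It assumes, hypothetically, that while the car is visible the sensors report its position and speed exactly (a trivially simple sensor model), and it uses a constant-velocity transition model to predict the car's position while it is hidden. The class name, the `confidence_decay` and `threshold` parameters, and the action names are illustrative inventions, and the confidence value is a crude stand-in for proper probabilistic state estimation.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass
class CarBelief:
    position_m: float  # estimated position along the road, in metres
    speed_mps: float   # estimated speed, in metres per second
    confidence: float  # 0.0 to 1.0: how much the agent trusts this estimate


class ModelBasedDriver:
    """Hypothetical model-based reflex agent that remembers a car after it disappears."""

    def __init__(self, confidence_decay: float = 0.9, threshold: float = 0.5) -> None:
        self.belief: Optional[CarBelief] = None  # internal state
        self.confidence_decay = confidence_decay
        self.threshold = threshold

    def update_state(self, percept: Optional[Tuple[float, float]], dt: float) -> None:
        if percept is not None:
            # Car visible: the assumed sensor model reports (position, speed) exactly.
            position, speed = percept
            self.belief = CarBelief(position, speed, 1.0)
        elif self.belief is not None:
            # Car hidden: a constant-velocity transition model predicts its new position,
            # and confidence decays for every step without a fresh observation.
            b = self.belief
            self.belief = CarBelief(
                b.position_m + b.speed_mps * dt,
                b.speed_mps,
                b.confidence * self.confidence_decay,
            )

    def __call__(self, percept: Optional[Tuple[float, float]], dt: float = 1.0) -> str:
        self.update_state(percept, dt)
        # The condition-action rule reads the internal state, not just the raw percept.
        if self.belief is not None and self.belief.confidence >= self.threshold:
            return "keep_extra_distance"
        return "drive_normally"


driver = ModelBasedDriver()
print(driver((0.0, 25.0)))  # car visible: keep_extra_distance
print(driver(None))         # car hidden behind the truck: keep_extra_distance
```

A simple reflex agent given the same `None` percept would have nothing to react to. The model-based agent keeps acting on its belief until enough unobserved steps drive the confidence below the threshold, which mirrors the limitation that uncertainty accumulates while the model runs without correction.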

Advantages:

- Handles partial observability
- Can track objects and states that are temporarily hidden
- More robust than simple reflex agents

Limitations:

- Still reactive at its core: uses rules, not planning
- Requires an accurate model of how the world works
- Uncertainty accumulates over time as the internal model diverges from reality

Best suited for: Partially observable environments where the agent can model world dynamics but does not need to plan sequences of actions.

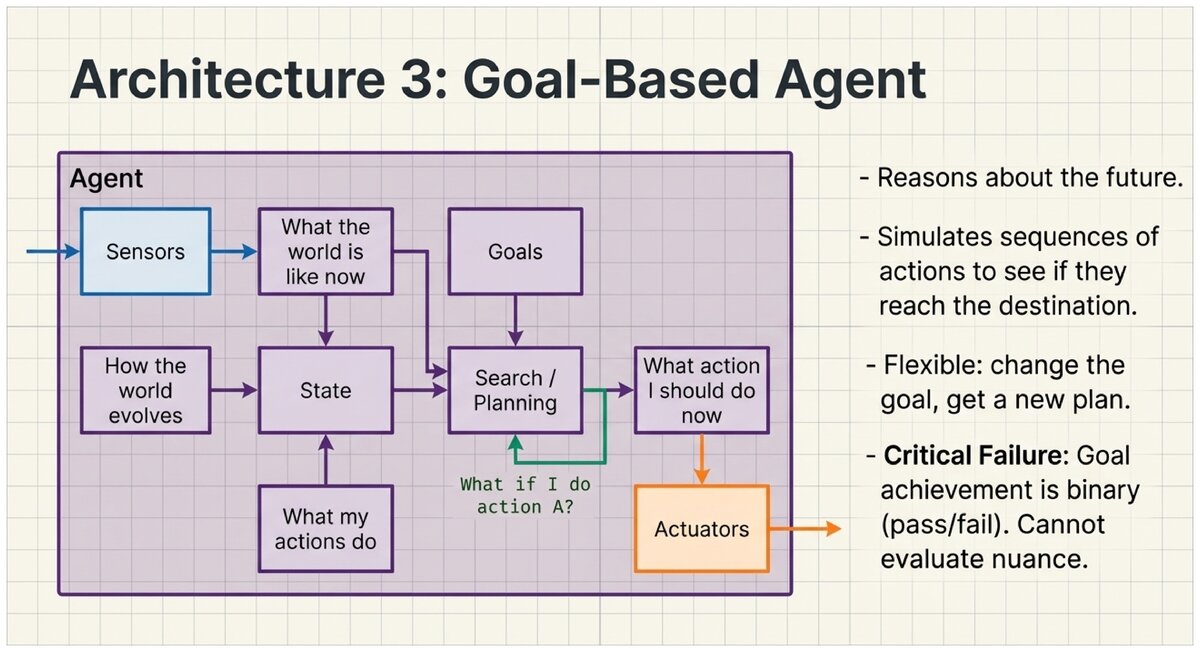

Architecture 3: Goal-Based Agents

Goal-Based Agent: An agent that explicitly represents goals (descriptions of desirable states) and uses search or planning to find action sequences that achieve those goals. Rather than following reactive rules, the agent reasons about what would happen if it took various sequences of actions.

The key difference from reflex agents: a goal-based agent reasons about the future. It asks "If I do X, then Y, then Z, will I reach my goal?" and chooses an action sequence for which the answer is yes.

GPS Navigation

Goal: Reach "123 Main Street"

Process:

1. Current state: at the intersection of 1st Ave and Oak St.
2. Search through possible routes: "Turn right and go 2 blocks" vs. "Turn left and take the highway" vs. …
3. Evaluate: which sequence of turns leads to the goal state?
4. Select and execute: "Turn right, go 2 blocks, turn left…"

The GPS plans a sequence of actions rather than reacting to each step one at a time. If a road is closed, it replans — it does not blindly follow pre-programmed rules.
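A goal-based agent can be sketched as search over a map. The example below uses breadth-first search, one of the simplest search strategies, over a small, entirely hypothetical road graph; it finds the route with the fewest road segments, not the shortest distance or travel time. The `ROADS` map, every intersection name other than the start and goal, and the `plan_route` function are assumptions for illustration. Replanning after a road closure is modelled by searching again with the closed segment excluded.

```python
from collections import deque
from typing import AbstractSet, Dict, List, Optional, Tuple

# Hypothetical road map: each intersection lists the intersections one road segment away.
ROADS: Dict[str, List[str]] = {
    "1st Ave & Oak St": ["2nd Ave & Oak St", "1st Ave & Elm St"],
    "2nd Ave & Oak St": ["1st Ave & Oak St", "3rd Ave & Oak St", "2nd Ave & Elm St"],
    "3rd Ave & Oak St": ["2nd Ave & Oak St", "123 Main Street"],
    "1st Ave & Elm St": ["1st Ave & Oak St", "Highway on-ramp"],
    "Highway on-ramp": ["1st Ave & Elm St", "123 Main Street"],
    "2nd Ave & Elm St": ["2nd Ave & Oak St"],
    "123 Main Street": ["3rd Ave & Oak St", "Highway on-ramp"],
}


def plan_route(
    start: str,
    goal: str,
    roads: Dict[str, List[str]],
    closed: AbstractSet[Tuple[str, str]] = frozenset(),
) -> Optional[List[str]]:
    """Breadth-first search for the route with the fewest segments from start to goal.

    `closed` holds (from, to) segments that must not be used. Returns None if no route exists.
    """
    frontier = deque([[start]])  # each entry is a partial route (list of intersections)
    visited = {start}
    while frontier:
        route = frontier.popleft()
        here = route[-1]
        if here == goal:  # goal test
            return route
        for nxt in roads.get(here, []):
            if nxt not in visited and (here, nxt) not in closed:
                visited.add(nxt)
                frontier.append(route + [nxt])
    return None


plan = plan_route("1st Ave & Oak St", "123 Main Street", ROADS)
print(plan)
# A road closure is reported: search again without the closed segment.
detour = plan_route("1st Ave & Oak St", "123 Main Street", ROADS,
                    closed={("3rd Ave & Oak St", "123 Main Street")})
print(detour)
```

With no closures the plan runs through 2nd Ave and 3rd Ave; once the segment from 3rd Ave & Oak St to 123 Main Street is closed, the same search returns a detour via the highway on-ramp. Nothing in `plan_route` is specific to this destination: changing the `goal` argument is enough to get a new plan.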

Advantages:

- Flexible: change the goal and the agent automatically computes a new plan
- Can handle novel situations not explicitly anticipated at design time
- Reasons about the long-term consequences of actions
- One architecture can pursue many different goals

Limitations:

- Computationally expensive compared to reflex agents
- Requires an accurate model of how actions change the world
- Goal achievement is binary: goal met or not met (no "degrees of success")

Best suited for: Sequential environments where the agent must plan ahead, especially when goals change frequently or the action space is too complex for hand-coded rules.

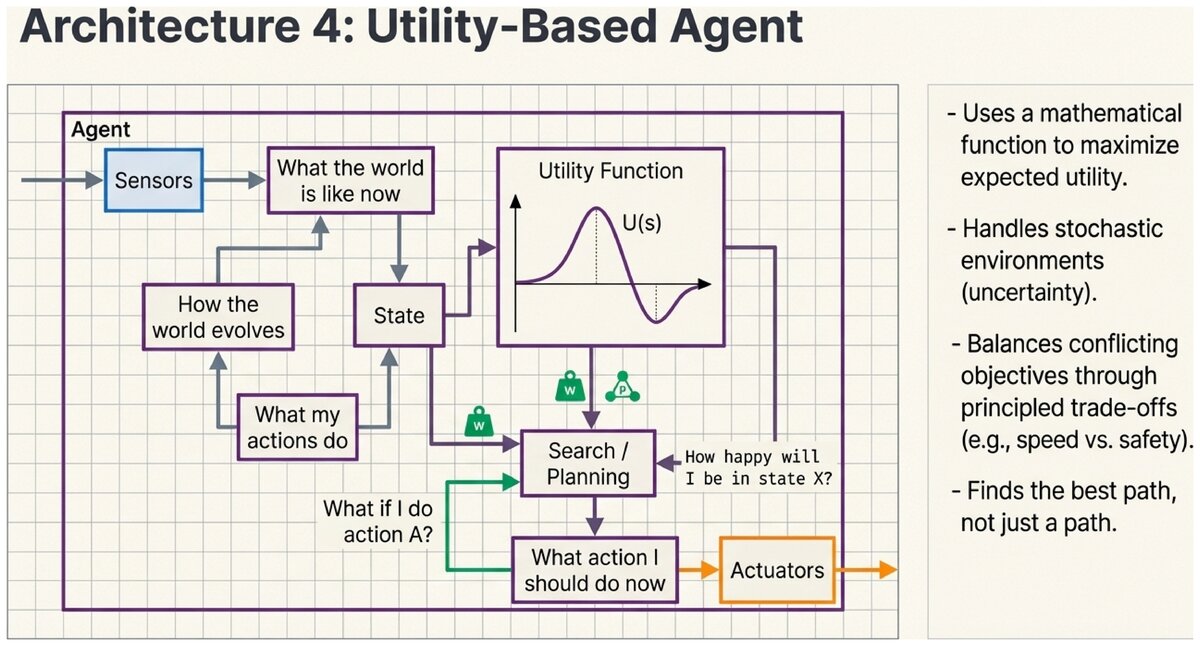

Architecture 4: Utility-Based Agents

Utility-Based Agent: An agent that uses a utility function (a function that maps states, or sequences of states, to a numeric measure of desirability) and chooses actions that maximize expected utility. Utility provides a continuous, nuanced measure of success rather than binary goal achievement.
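"Maximize expected utility" can be written out as follows. The notation is introduced here for illustration: $s$ is the current state, $a$ a candidate action, $P(s' \mid s, a)$ the probability that taking $a$ in $s$ leads to state $s'$, and $U(s')$ the utility of that outcome.

$$
EU(a \mid s) = \sum_{s'} P(s' \mid s, a)\, U(s'), \qquad a^{*} = \arg\max_{a} EU(a \mid s)
$$

In a deterministic environment each action has a single outcome with probability 1, so the expected utility of an action reduces to the utility of the state it produces.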

Goal-based agents treat success as binary: the goal is either achieved or it is not. But real problems often require trade-offs among multiple competing criteria. A utility function lets the agent balance these criteria numerically.

Self-Driving Taxi Route Choice

A goal-based agent with goal "reach destination" might take the fastest route regardless of other factors.

A utility-based agent evaluates routes on multiple criteria simultaneously:

| Route | Utility Score | Score Breakdown |
|---|---|---|
| Highway (fast, risky construction zone) | 52 | Speed: +40, Safety risk: -25, Fuel: +15, Comfort: +12, Traffic: +10 (net: 52) |
| Surface streets (slower, smooth roads) | 85 | Speed: +25, Safety: +30, Fuel: +12, Comfort: +18, Traffic: +0 (net: 85) |
| Expressway (medium speed, tolls) | 78 | Speed: +35, Safety: +25, Fuel: +8, Comfort: +15, Traffic: +5, Toll: -10 (net: 78) |

The agent selects the surface streets route (utility = 85) even though it is not the fastest option. The utility function captures the relative importance of safety, comfort, and efficiency in a single principled measure.
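The route comparison can be reproduced as a short computation. Treating utility as a weighted sum of the criterion scores is an assumption made here for illustration, and the `utility` and `choose_route` functions are hypothetical; with every weight equal to 1, a route's utility is simply the sum of its component scores from the table.

```python
from typing import Dict, Optional

# Component scores from the route comparison table.
ROUTES: Dict[str, Dict[str, float]] = {
    "Highway": {"speed": 40, "safety": -25, "fuel": 15, "comfort": 12, "traffic": 10},
    "Surface streets": {"speed": 25, "safety": 30, "fuel": 12, "comfort": 18, "traffic": 0},
    "Expressway": {"speed": 35, "safety": 25, "fuel": 8, "comfort": 15, "traffic": 5, "toll": -10},
}


def utility(scores: Dict[str, float], weights: Optional[Dict[str, float]] = None) -> float:
    """Weighted sum of criterion scores; a criterion without a weight counts with weight 1."""
    weights = weights or {}
    return sum(weights.get(criterion, 1.0) * score for criterion, score in scores.items())


def choose_route(routes: Dict[str, Dict[str, float]],
                 weights: Optional[Dict[str, float]] = None) -> str:
    """Pick the route with the highest utility."""
    return max(routes, key=lambda name: utility(routes[name], weights))


print("Chosen:", choose_route(ROUTES))  # Chosen: Surface streets
print("Speed-heavy:", choose_route(ROUTES, {"speed": 3.0}))  # Speed-heavy: Expressway
```

Raising the weight on speed to 3.0 makes the expressway the top choice instead. The search for the best option is unchanged; only the utility function, which encodes the relative importance of the criteria, differs.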

Advantages:

- Handles conflicting objectives through principled trade-offs
- Reasons rationally under uncertainty (stochastic environments)
- Produces a continuous scale of "how good," not just pass/fail
- Grounded in decision theory, which gives a principled basis for choosing actions under uncertainty

Limitations:

- Most computationally expensive of the four architectures
- Utility functions are difficult to specify correctly
- Requires probability estimates for outcomes in stochastic environments
- Misspecified utility functions can produce unintended behavior

Best suited for: Environments with multiple conflicting objectives, stochastic outcomes, or trade-offs between competing performance criteria.
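In a stochastic environment the agent does not know which outcome an action will produce, so, as the definition of a utility-based agent requires, it weighs each possible outcome by its probability and picks the action with the highest expected utility. The sketch below applies this to two routes. The outcome probabilities and utilities are invented for illustration (they are not the scores from the route table), and `expected_utility` and `best_action` are hypothetical helper names.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical outcome model: each action maps to (probability, utility of resulting state) pairs.
OUTCOMES: Dict[str, List[Tuple[float, float]]] = {
    # Highway: usually fast, but a construction-zone delay is assumed 30% of the time.
    "Highway": [(0.7, 90.0), (0.3, 20.0)],
    # Surface streets: slower but predictable.
    "Surface streets": [(0.95, 80.0), (0.05, 60.0)],
}


def expected_utility(outcomes: List[Tuple[float, float]]) -> float:
    """Sum of probability * utility over all possible outcomes of one action."""
    total_p = sum(p for p, _ in outcomes)
    if not math.isclose(total_p, 1.0, abs_tol=1e-9):
        raise ValueError(f"outcome probabilities sum to {total_p}, not 1")
    return sum(p * u for p, u in outcomes)


def best_action(model: Dict[str, List[Tuple[float, float]]]) -> str:
    """Maximum-expected-utility choice among the available actions."""
    return max(model, key=lambda action: expected_utility(model[action]))


for action, outcomes in OUTCOMES.items():
    print(action, round(expected_utility(outcomes), 2))
print("Chosen:", best_action(OUTCOMES))  # Chosen: Surface streets
```

The highway has the better best case but the lower expected utility, so the agent prefers the surface streets. Producing trustworthy probability estimates like these is exactly the extra burden listed among the limitations above.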

Comparing the Four Architectures

| Architecture | Uses History? | Plans Ahead? | Handles Uncertainty? | Best Environments |
|---|---|---|---|---|
| Simple Reflex | No | No | No | Fully observable, simple, reactive |
| Model-Based Reflex | Yes (internal state) | No | Limited | Partially observable, reactive |
| Goal-Based | Yes | Yes | Limited | Sequential, planning required |
| Utility-Based | Yes | Yes | Yes | Trade-offs, stochastic outcomes |

Each architecture builds directly on the previous one:

- Simple Reflex: Percept → Action (rules only)
- Model-Based: Add internal state tracking to handle hidden information
- Goal-Based: Add goals and planning to reason about the future
- Utility-Based: Add a utility function to handle trade-offs and uncertainty

A more sophisticated architecture is more powerful, but it is also more computationally expensive and harder to design. Choose the simplest architecture that handles your environment’s properties.

Matching Architecture to Environment

The environment taxonomy from Section 2.3 connects directly to architecture selection:

- Fully observable + deterministic + static: A simple reflex agent may suffice.
- Partially observable: You need at least a model-based agent with internal state.
- Sequential: You need at least a goal-based agent that can plan ahead.
- Stochastic with trade-offs: You need a utility-based agent.
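These rules of thumb amount to taking the most demanding requirement among the environment's properties. A minimal sketch, assuming the environment can be summarized by three yes/no flags (a simplification that ignores every other property); the `Architecture` enum and `minimum_architecture` function are hypothetical names:

```python
from enum import IntEnum


class Architecture(IntEnum):
    """Agent architectures, ordered from least to most sophisticated."""
    SIMPLE_REFLEX = 1
    MODEL_BASED_REFLEX = 2
    GOAL_BASED = 3
    UTILITY_BASED = 4


def minimum_architecture(fully_observable: bool, sequential: bool,
                         stochastic_with_tradeoffs: bool) -> Architecture:
    """Return the least sophisticated architecture that meets every requirement."""
    needed = Architecture.SIMPLE_REFLEX  # may suffice for simple, fully observable tasks
    if not fully_observable:
        needed = max(needed, Architecture.MODEL_BASED_REFLEX)  # must track hidden state
    if sequential:
        needed = max(needed, Architecture.GOAL_BASED)  # must plan ahead
    if stochastic_with_tradeoffs:
        needed = max(needed, Architecture.UTILITY_BASED)  # must weigh uncertain outcomes
    return needed


taxi = minimum_architecture(fully_observable=False, sequential=True,
                            stochastic_with_tradeoffs=True)
print(taxi.name)  # UTILITY_BASED
```

The result is a lower bound, matching the "at least" wording of the rules: a designer may still choose a more capable architecture.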

For each scenario, identify the minimum architecture needed and explain your reasoning:

- A robot that waters plants when a soil moisture sensor reads below a threshold
- A navigation system for a hiking trail (static environment, user sets destination)
- A stock trading algorithm that must balance risk, return, and liquidity
- A rescue robot searching a damaged building for survivors

Think carefully about whether the environment is partially observable, whether sequences of decisions matter, and whether trade-offs are involved.


Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.