Ethics and Societal Impact

Unit 1: Foundations of Artificial Intelligence — Section 1.5

AI promises remarkable benefits — earlier disease detection, safer roads, more productive science. But those same capabilities introduce serious risks: perpetuating historical discrimination, enabling mass surveillance, displacing workers, and concentrating power in the hands of a few organizations. As students entering the AI field, you will face decisions about what to build, for whom, and with what safeguards. This section provides the vocabulary and frameworks you will need for those decisions.

This Crash Course episode covers algorithmic bias and fairness, including the Microsoft Tay incident, predictive policing, and fairness measurement challenges.

The Benefits of AI

Before examining risks, it is worth grounding ourselves in what AI stands to offer.

Healthcare: Earlier disease detection through medical imaging analysis, faster drug discovery (AlphaFold marked a breakthrough in predicting protein structures), personalized treatment recommendations, and assistive technologies for people with disabilities.

Safety: Autonomous vehicles could substantially reduce traffic deaths — approximately 90% of vehicle accidents involve human error. AI systems can predict and help manage natural disasters, and can automate the most dangerous elements of jobs in mining, firefighting, and hazardous materials handling.

Scientific progress: AI accelerates research in genomics, climate modeling, materials science, and astrophysics. Problems that would have required decades of human analysis can be explored in months.

Accessibility and equity: Real-time translation breaks language barriers. AI tutors can provide personalized instruction regardless of a student’s location. Expert-level guidance in law, medicine, and finance could become accessible to people who cannot afford professional services.

Bias and Fairness

Bias is one of the most documented and consequential risks in deployed AI systems. An AI system exhibits algorithmic bias when it systematically treats groups of people differently in ways that are unjust or harmful.

Three documented cases of algorithmic bias:

Facial analysis accuracy gaps: Multiple studies have found accuracy gaps across demographic groups in commercial face analysis and recognition systems. The landmark "Gender Shades" audit by Joy Buolamwini and Timnit Gebru (2018) found that commercial gender classifiers misclassified darker-skinned women at error rates dramatically higher than lighter-skinned men. One system had an error rate of less than 1% for lighter-skinned men and over 34% for darker-skinned women. The audit also found that widely used face benchmark datasets consisted overwhelmingly of lighter-skinned subjects.

COMPAS recidivism scoring: The COMPAS algorithm, used in some U.S. courts to assess the risk that a defendant will re-offend, was analyzed by ProPublica in 2016. Among defendants who did not go on to re-offend, Black defendants had been labeled high-risk at nearly twice the rate of white defendants. In other words, the tool’s false positive rate differed sharply by race.

Resume screening: Amazon abandoned an AI recruiting tool in 2018 after discovering it systematically downgraded resumes that included the word "women’s" (as in "women’s chess club") because it had been trained on a decade of resumes submitted to the company, which historically came predominantly from men.
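The COMPAS finding above is a claim about false positive rates: among people who did not re-offend, how many were flagged as high-risk in each group? The following is a minimal sketch of that calculation. The function name, the record layout, and the numbers are illustrative assumptions; the data is synthetic and is not drawn from the ProPublica analysis.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """Compute the false positive rate for each group.

    records: iterable of (group, predicted_high_risk, reoffended) tuples.
    A false positive is someone flagged high-risk who did not re-offend.
    """
    false_pos = defaultdict(int)   # flagged high-risk, did not re-offend
    negatives = defaultdict(int)   # everyone who did not re-offend
    for group, predicted_high_risk, reoffended in records:
        if not reoffended:
            negatives[group] += 1
            if predicted_high_risk:
                false_pos[group] += 1
    return {g: false_pos[g] / negatives[g] for g in negatives}

# Synthetic toy data (hypothetical groups "A" and "B"), not real court records.
records = (
    [("A", True, False)] * 4 + [("A", False, False)] * 6 + [("A", True, True)] * 5
    + [("B", True, False)] * 2 + [("B", False, False)] * 8 + [("B", True, True)] * 5
)

rates = false_positive_rate_by_group(records)
print(rates)  # {'A': 0.4, 'B': 0.2}
```

In this toy data, group A is wrongly flagged at twice the rate of group B even though the same number of people in each group actually re-offended.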

Why does bias occur?

- Training data reflects historical inequities in society.
- Dataset creators have blind spots about whose perspectives are represented.
- Optimizing for overall accuracy can mask poor performance on minority subgroups.
- Proxy variables, seemingly neutral data points like ZIP code, can correlate strongly with protected characteristics like race.
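To see how a strong overall accuracy number can hide poor performance on a smaller subgroup, consider this small sketch. The group names, helper functions, and counts are hypothetical and chosen only to make the arithmetic easy to follow.

```python
def accuracy(pairs):
    """Fraction of (true_label, predicted_label) pairs that match."""
    pairs = list(pairs)
    return sum(1 for y_true, y_pred in pairs if y_true == y_pred) / len(pairs)

def accuracy_by_group(rows):
    """rows: iterable of (group, true_label, predicted_label) tuples."""
    groups = {}
    for group, y_true, y_pred in rows:
        groups.setdefault(group, []).append((y_true, y_pred))
    return {g: accuracy(p) for g, p in groups.items()}

# Synthetic evaluation set: 90 rows from a majority group, 10 from a minority group.
rows = (
    [("majority", 1, 1)] * 88 + [("majority", 1, 0)] * 2
    + [("minority", 1, 1)] * 5 + [("minority", 1, 0)] * 5
)

overall = accuracy((t, p) for _, t, p in rows)
per_group = accuracy_by_group(rows)

print(f"overall:  {overall:.2f}")                 # overall:  0.93
print(f"majority: {per_group['majority']:.2f}")   # majority: 0.98
print(f"minority: {per_group['minority']:.2f}")   # minority: 0.50
```

A model reported only as "93% accurate" would look fine here, while being no better than a coin flip for the minority group. Reporting metrics per group is one common way to surface that gap.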

Algorithmic Bias: Systematic and unfair discrimination produced by an AI system, arising from non-representative training data, biased problem formulations, or optimization criteria that perform inequitably across demographic groups. Bias is not always intentional; it can emerge even when developers actively try to avoid it.

Proxy Variable: A variable that appears neutral but correlates strongly with a protected characteristic such as race, gender, or religion. For example, using ZIP code as a predictor may introduce racial bias because residential segregation makes ZIP code a proxy for race in many U.S. cities.

Fairness (Algorithmic): The principle that an AI system’s outputs should be equitable across demographic groups. Multiple mathematical definitions of fairness exist and are often mutually incompatible, which is a fundamental challenge in fair machine learning.
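One way to see why fairness definitions can conflict is to compare two of them on the same predictions. The sketch below uses two criteria that are common in the fairness literature but are not named in this section: demographic parity (equal rates of positive predictions across groups) and equal opportunity (equal true positive rates across groups). The data is synthetic and the function names are illustrative assumptions.

```python
def selection_rate(rows):
    """Share of people who receive a positive prediction."""
    return sum(pred for _, pred in rows) / len(rows)

def true_positive_rate(rows):
    """Share of truly positive people who receive a positive prediction."""
    preds_for_positives = [pred for label, pred in rows if label == 1]
    return sum(preds_for_positives) / len(preds_for_positives)

# Each row is (true_label, prediction). The classifier here is perfectly
# accurate, but the two groups have different base rates of the outcome.
group_a = [(1, 1)] * 6 + [(0, 0)] * 4   # 60% of group A is truly positive
group_b = [(1, 1)] * 3 + [(0, 0)] * 7   # 30% of group B is truly positive

parity_gap = selection_rate(group_a) - selection_rate(group_b)
opportunity_gap = true_positive_rate(group_a) - true_positive_rate(group_b)

print(f"demographic parity gap: {parity_gap:.2f}")       # 0.30
print(f"equal opportunity gap:  {opportunity_gap:.2f}")  # 0.00
```

Even a classifier that makes no mistakes satisfies equal opportunity while violating demographic parity when base rates differ. Closing the parity gap here would require deliberately introducing errors for one group, which is the kind of trade-off the definition above refers to.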

Privacy and Surveillance

AI enables surveillance capabilities that would have been technically and economically impossible a decade ago.

Facial recognition systems can identify individuals moving through public spaces at scale, building movement histories without the subject’s knowledge or consent. Social media analysis can infer political beliefs, health conditions, and relationships from patterns of behavior that users may not realize they are revealing. Behavioral prediction from digital footprints can be used to target advertising, adjust insurance premiums, or assess creditworthiness.

These capabilities are not inherently harmful — contact tracing during a pandemic or identifying missing children both represent legitimate uses. But the same infrastructure can be used for political repression, discrimination, and social control. The challenge is designing governance frameworks that permit beneficial uses while prohibiting harmful ones.

Employment Displacement

Automation has always disrupted labor markets — the industrial revolution eliminated millions of agricultural and craft jobs while creating others. AI may accelerate and broaden that disruption.

Jobs most immediately at risk include those involving routine cognitive work: data entry, basic customer service, paralegal research, radiological image interpretation, and some aspects of accounting. Physical jobs involving variable, unstructured environments (plumber, electrician, surgeon) are less immediately threatened.

The equity dimension: The workers most likely to be displaced by AI tend to be in lower-wage jobs with less access to retraining. The workers and investors who benefit most from AI tend to be in higher-wage positions. If not addressed through policy, AI-driven automation could substantially increase economic inequality.

New jobs will emerge alongside the automation of existing ones, as they have in every previous wave of automation. But "new jobs will emerge" is cold comfort to a 50-year-old truck driver whose livelihood is eliminated and who cannot easily retrain.

What obligations do companies deploying AI systems have toward workers whose jobs are automated? What role should government play? Who should benefit from productivity gains driven by AI?

Autonomous Weapons

AI-powered weapons that can select and engage targets without human authorization represent a category of risk that many AI researchers consider among the most urgent.

Concerns include:

- Lowered barriers to conflict: If attacks require no human soldiers at risk, the political cost of initiating conflict decreases.
- Accountability gaps: When an autonomous weapon makes a decision that kills civilians, who is responsible: the developer, the operator, or the military commander?
- Arms races: Multiple states are investing in autonomous weapons systems, creating pressures to deploy before safety and accountability frameworks exist.

Over 3,000 AI researchers and technologists have signed an open letter pledging not to work on lethal autonomous weapons.

Misinformation and Manipulation

Generative AI dramatically lowers the cost of producing persuasive false content.

- Deepfakes, AI-synthesized videos that place real people in fabricated situations, are increasingly difficult to detect.
- Large language models can generate thousands of unique, plausible-sounding social media posts promoting a viewpoint with minimal human effort.
- Personalized persuasion, which targets individuals with messages tailored to their specific psychological profiles, can exploit individual vulnerabilities at scale.

These capabilities threaten the epistemic foundations of democratic societies: if citizens cannot reliably determine what is real, informed political participation becomes impossible.

AI Governance: The EU AI Act

The European Union Artificial Intelligence Act (Regulation 2024/1689), which entered into force in 2024, is the world’s first comprehensive legal framework specifically regulating AI. It takes a risk-based approach: different requirements apply depending on how much risk an AI system poses.

The EU AI Act’s four risk tiers:

Unacceptable Risk (Prohibited): AI systems in this category are banned outright. Examples include: social scoring systems that rank citizens based on behavior; AI that exploits psychological vulnerabilities to manipulate behavior; real-time remote biometric identification in public spaces for law enforcement (with narrow exceptions); and predictive crime risk profiling based solely on personality traits.

High Risk (Strict Requirements): AI systems used in critical infrastructure, medical devices, employment decisions, credit scoring, criminal justice, and education must meet mandatory requirements including risk management systems, data quality standards, human oversight mechanisms, transparency documentation, and a conformity assessment before deployment (carried out by an independent third party for some categories).

Limited Risk (Transparency Obligations): Chatbots must disclose that users are interacting with an AI, and people exposed to an emotion recognition system must be told that it is in use. Synthetic content (deepfakes) must be labeled.

Minimal Risk (No Specific Requirements): AI in video games, spam filters, and similar low-stakes applications faces no specific EU AI Act obligations, though general product safety law still applies.
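The four tiers above can be pictured as a simple lookup from a use case to a tier and its headline obligation. The sketch below is a study aid only: the `RiskTier` enum, the `EXAMPLE_TIERS` table, and the `describe` helper are hypothetical names, the entries are taken from the examples in this section, and real classification under the Act depends on the legal text and the specific context of use.

```python
from enum import Enum

class RiskTier(Enum):
    """The four tiers described above, with a one-phrase summary of each."""
    UNACCEPTABLE = "prohibited"
    HIGH = "strict requirements"
    LIMITED = "transparency obligations"
    MINIMAL = "no specific requirements"

# Toy table built from the examples in this section (not a legal reference).
EXAMPLE_TIERS = {
    "social scoring": RiskTier.UNACCEPTABLE,
    "credit scoring": RiskTier.HIGH,
    "employment decisions": RiskTier.HIGH,
    "chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
    "video game": RiskTier.MINIMAL,
}

def describe(use_case):
    """Return a one-line summary, or flag use cases this toy table lacks."""
    tier = EXAMPLE_TIERS.get(use_case.lower())
    if tier is None:
        return f"{use_case}: not in this toy table; needs legal review"
    return f"{use_case}: {tier.name} ({tier.value})"

for case in ["Social scoring", "Credit scoring", "Chatbot", "Spam filter", "Drone delivery"]:
    print(describe(case))
```

The fallback branch matters as much as the table: a use case that does not match a known example should prompt review rather than default to the lowest tier.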

Risk-Based Regulation: A regulatory approach that scales requirements according to the severity of potential harm. The EU AI Act applies strict requirements only to high-risk AI applications, allowing low-risk innovation to proceed with minimal overhead while protecting against the most serious harms.

EU AI Act: European Union Regulation 2024/1689, the world’s first comprehensive AI-specific law. It establishes a risk-based framework prohibiting certain AI uses, imposing strict requirements on high-risk AI applications, and requiring transparency for certain lower-risk systems.

Responsible AI Development

The question is not whether to use AI, but how to use it responsibly.

Technical approaches include:

- Fairness-aware machine learning: explicitly optimizing for equitable performance across demographic groups
- Explainable AI (XAI): designing systems whose decision processes can be understood and audited
- Differential privacy: adding calibrated noise to computations over data (for example, query results or training updates) so that individual records are protected while statistical utility is preserved
- Robustness testing: systematically evaluating system behavior on edge cases, distribution shifts, and adversarial inputs
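As one concrete illustration of calibrated noise in differential privacy, the sketch below applies the Laplace mechanism, a standard technique, to a counting query. The function names, the `ages` data, and the choice of `epsilon` are assumptions made for illustration; production systems should rely on a vetted differential privacy library rather than hand-rolled sampling.

```python
import random

def laplace_noise(scale, rng):
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential samples with mean `scale`
    follows a Laplace distribution with that scale.
    """
    rate = 1.0 / scale
    return rng.expovariate(rate) - rng.expovariate(rate)

def private_count(values, predicate, epsilon, rng):
    """Return a noisy count of the values that satisfy `predicate`.

    Adding or removing one person changes a count by at most 1, so the
    noise scale is 1 / epsilon. Smaller epsilon means more noise and
    stronger privacy.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(42)                      # seeded only for repeatability
ages = [23, 35, 41, 52, 67, 29, 58, 44]      # synthetic records
true_count = sum(1 for age in ages if age >= 40)
noisy = private_count(ages, lambda age: age >= 40, epsilon=0.5, rng=rng)

print(f"true count: {true_count}, noisy count: {noisy:.2f}")
```

The noisy answer is close enough to be useful in aggregate, yet no single released value reveals with certainty whether a particular person is in the data.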

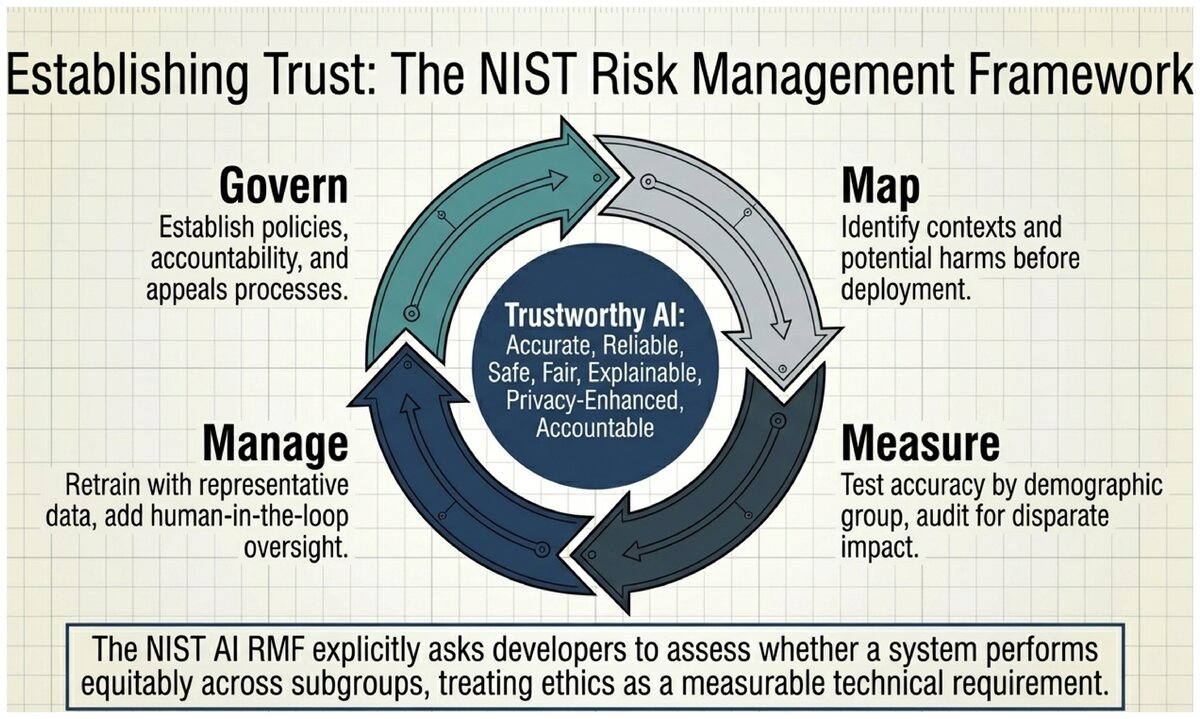

Governance approaches include:

- Impact assessments: requiring organizations to evaluate potential harms before deploying AI in high-stakes contexts
- Algorithmic auditing: independent third-party evaluation of deployed systems for bias, accuracy, and compliance
- Transparency requirements: mandatory disclosure when consequential decisions are made with AI assistance
- Human oversight: requiring a human in the loop for decisions that significantly affect individuals
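Human oversight is often implemented as a routing rule: the system acts on its own only for clear, low-stakes cases and hands everything else to a person. The following is a minimal sketch under assumed thresholds; the `Decision` class, the `route` function, and the cutoffs of 0.2 and 0.8 are hypothetical and would need to be set and validated for a real application.

```python
from dataclasses import dataclass

@dataclass
class Decision:
    outcome: str      # "approve", "deny", or "needs_human_review"
    decided_by: str   # "model" or "human"

def route(score, high_stakes, low=0.2, high=0.8):
    """Automate only clear, low-stakes cases; send the rest to a person.

    score: the model's estimated probability that the case should be approved.
    high_stakes: True when the decision significantly affects the individual.
    """
    if high_stakes or low < score < high:
        return Decision("needs_human_review", "human")
    return Decision("approve" if score >= high else "deny", "model")

print(route(0.95, high_stakes=False))  # clear and low-stakes: model approves
print(route(0.50, high_stakes=False))  # uncertain score: human review
print(route(0.95, high_stakes=True))   # high stakes: human review regardless
```

Note the design choice that high-stakes cases always go to a human, no matter how confident the model is. Confidence scores can be wrong in exactly the situations where oversight matters most.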

Further reading on AI ethics:

NIST AI Risk Management Framework (AI RMF 1.0) — Comprehensive voluntary framework for trustworthy AI; U.S. public domain.

Montreal AI Ethics Institute — Independent research on AI ethics; publishes the State of AI Ethics annual report and an AI Ethics Living Dictionary, both CC BY 4.0.

EU AI Act (EUR-Lex, CC BY 4.0) — Full text of Regulation 2024/1689 at eur-lex.europa.eu/legal-content/EN/TXT/?uri=CELEX:32024R1689

Ethics in AI is not a constraint imposed from outside — it is part of building systems that actually work as intended in the real world. Biased systems produce wrong answers for some users. Opaque systems erode trust. Unsecured systems create liabilities. An AI system that is accurate, fair, explainable, and accountable is simply a better system.

The field needs engineers and scientists who treat ethics as a technical requirement, not an afterthought.

Which of the AI risks discussed in this section concerns you most, and why? Which potential benefit excites you most?

Now consider your personal answer to: if you were building an AI system for use in a consequential domain (hiring, healthcare, criminal justice), what safeguards would you consider non-negotiable?

There is no single right answer — but thinking through your position now will help you navigate real decisions later in your career.


You have now completed the five content sections of Unit 1. The next page is the hands-on lab assignment: you will apply this week’s concepts by analyzing real AI systems in the wild.

AI governance content incorporates material from the NIST AI Risk Management Framework (AI RMF 1.0), a U.S. Government work in the public domain.

Ethics content adapted from the Montreal AI Ethics Institute, licensed under CC BY 4.0.

Original content for CSC 114: Artificial Intelligence I, Central Piedmont Community College.

This work is licensed under CC BY-SA 4.0.