Classifying Environments

Unit 2: Intelligent Agents — Section 2.3

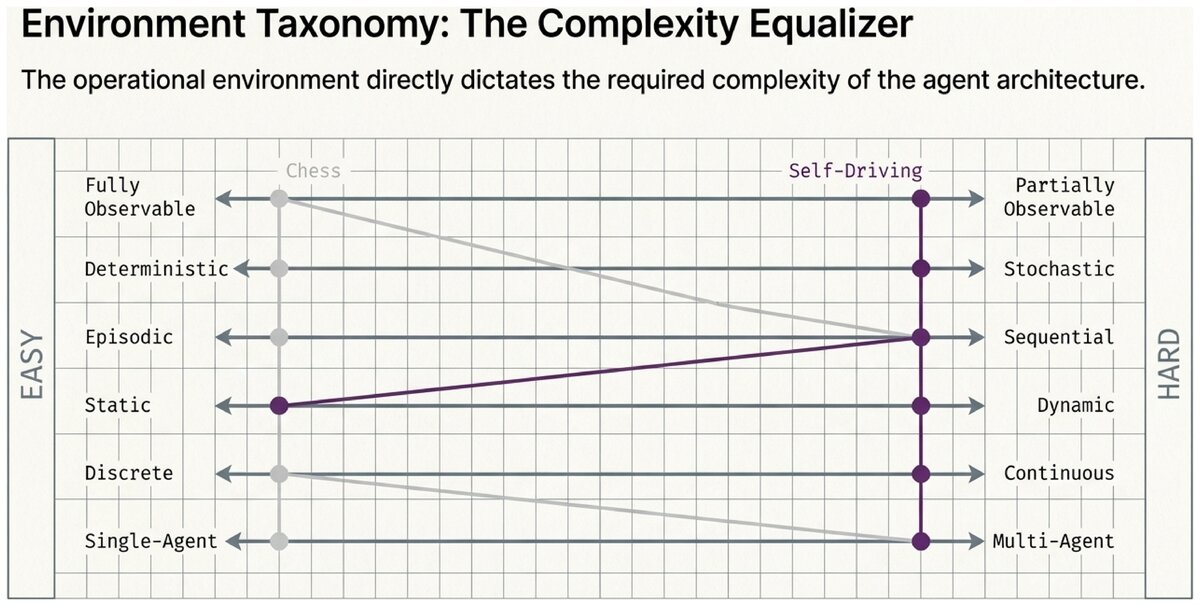

Chess and driving are both "environments," but a chess-playing AI and a self-driving car face fundamentally different problems. In chess, you see the entire board, moves have predictable results, and the board stays put while you decide on your move. On the road, other cars are hidden around corners, pedestrians are unpredictable, and the world keeps moving while you think.

These differences are not just details — they determine which agent architectures work and which fail. Classifying environments along six key dimensions gives you the analytical tools to make those distinctions precisely.

Six Dimensions of Environment Classification

1. Fully Observable vs. Partially Observable

- Fully Observable Environment: An environment in which the agent’s sensors give it complete access to the state of the environment at every time step. The agent always knows exactly what situation it is in.

- Partially Observable Environment: An environment in which the agent’s sensors provide incomplete, noisy, or limited information about the environment’s state. Parts of the world are hidden from the agent at any given moment.

Fully observable examples: Chess (entire board is visible), crossword puzzles, tic-tac-toe.

Partially observable examples: Driving (cars are hidden around corners), poker (opponent’s cards are hidden), medical diagnosis (internal organ state requires tests to observe).

Design impact: In a fully observable environment, an agent can make decisions based solely on current percepts. In a partially observable environment, the agent must maintain internal beliefs about the unobserved parts of the world — this is significantly more complex.
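The contrast can be made concrete with a small Python sketch. Everything here is hypothetical and chosen for illustration: a four-cell toy world, a pedestrian that is assumed to stay still, and a sensor that sees only some cells at each step. The fully observable agent acts on the percept directly, while the partially observable agent keeps a belief (the set of cells where the pedestrian could still be) and updates it after every percept.

```python
from typing import Set

# Hypothetical toy world: a stationary pedestrian is in one of four cells.
ALL_CELLS: Set[int] = {0, 1, 2, 3}


def act_fully_observable(pedestrian_cell: int) -> str:
    """With full observability, the current percept is the state itself."""
    return "brake" if pedestrian_cell == 0 else "drive"


def update_belief(belief: Set[int], visible_cells: Set[int], seen_at: Set[int]) -> Set[int]:
    """Keep only the cells consistent with the latest sensor report.

    visible_cells: cells the sensor could see this step.
    seen_at: visible cells where the pedestrian was detected (may be empty).
    """
    if seen_at:
        return belief & seen_at
    # Nothing detected: the pedestrian must be in a cell we could not see.
    return belief - visible_cells


def act_partially_observable(belief: Set[int]) -> str:
    """Act cautiously while the dangerous cell (0) is still possible."""
    return "brake" if 0 in belief else "drive"


belief = set(ALL_CELLS)
belief = update_belief(belief, visible_cells={2, 3}, seen_at=set())
first_action = act_partially_observable(belief)   # cell 0 not ruled out yet
belief = update_belief(belief, visible_cells={0, 2}, seen_at=set())
second_action = act_partially_observable(belief)  # cell 0 has been ruled out
print(first_action, second_action, sorted(belief))
```

The belief set is the simplest form of internal state. A more realistic agent would typically attach probabilities to each possibility and account for objects that move between observations.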

2. Deterministic vs. Stochastic

- Deterministic Environment: An environment in which the next state is completely determined by the current state and the agent’s action. There is no randomness: the same action in the same situation always produces the same result.

- Stochastic Environment: An environment that contains randomness or uncertainty. The same action in the same state can produce different outcomes on different occasions.

Deterministic examples: Chess (a move always produces the same board position), 8-puzzle, Sudoku.

Stochastic examples: Backgammon (dice rolls), driving (unpredictable pedestrians and drivers), stock market, weather.

Design impact: In a deterministic environment, an agent can predict exactly what will happen and plan with certainty. In a stochastic environment, the agent must reason about probabilities and be prepared for unexpected outcomes.

An environment that appears stochastic but is actually deterministic under a more complete description is sometimes called strategic (when the uncertainty comes from other agents' unpredictable choices) or uncertain (when it comes from hidden physics).
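One common way to reason about probabilistic outcomes is to compare actions by expected utility. The following is a minimal sketch, assuming the agent already has a model that lists each action's possible outcomes as (probability, utility) pairs. The action names and numbers are made up for illustration.

```python
from typing import Dict, List, Tuple

# Each action maps to a list of (probability, utility) outcomes.
Outcomes = List[Tuple[float, float]]


def expected_utility(outcomes: Outcomes) -> float:
    """Probability-weighted average utility of one action."""
    return sum(p * u for p, u in outcomes)


def best_action(model: Dict[str, Outcomes]) -> str:
    """Pick the action with the highest expected utility."""
    return max(model, key=lambda action: expected_utility(model[action]))


# Deterministic case: every action has exactly one outcome with probability 1.
deterministic_model = {"left": [(1.0, 5.0)], "right": [(1.0, 8.0)]}

# Stochastic case: "risky" has the best possible outcome but a worse average.
stochastic_model = {
    "safe": [(1.0, 5.0)],
    "risky": [(0.5, 12.0), (0.5, -4.0)],
}

print(best_action(deterministic_model))  # right
print(best_action(stochastic_model))     # safe
```

In the deterministic model the expected utility is just the single known outcome, so the same code covers both cases. The stochastic model is where averaging over outcomes changes the decision.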

3. Episodic vs. Sequential

- Episodic Environment: An environment in which the agent’s experience is divided into independent episodes. In each episode the agent perceives and then performs a single action, and the action taken in one episode does not affect later episodes.

- Sequential Environment: An environment in which current decisions affect future situations. The agent must consider long-term consequences when choosing actions.

Episodic examples: Assembly-line defect detection (each item is independent), image classification (each image is separate), spam filtering (mostly — each email is classified independently).

Sequential examples: Chess (early moves affect the endgame), self-driving (current lane choice affects future options), medical treatment (decisions have lasting effects on patient health).

Design impact: In an episodic environment, the agent does not need to plan ahead because each decision stands alone. In a sequential environment, the agent must consider how today’s actions constrain or enable future options, which typically calls for search and planning.
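A tiny example shows why looking only at the immediate reward fails in a sequential setting. The world below is hypothetical: a deterministic table mapping each state and action to a reward and a next state, loosely modeled on the lane-choice example. The greedy chooser treats each decision as if it stood alone, while the lookahead chooser adds the best achievable future reward.

```python
from typing import Dict, Tuple

# Hypothetical deterministic world: state -> {action: (reward, next_state)}.
WORLD: Dict[str, Dict[str, Tuple[float, str]]] = {
    "start": {"fast_lane": (2.0, "jam"), "slow_lane": (1.0, "clear")},
    "jam": {"wait": (0.0, "end")},
    "clear": {"cruise": (5.0, "end")},
    "end": {},
}


def greedy_action(state: str) -> str:
    """Episodic-style choice: consider only the immediate reward."""
    options = WORLD[state]
    return max(options, key=lambda a: options[a][0])


def best_return(state: str) -> float:
    """Best total reward reachable from `state`, by exhaustive lookahead."""
    options = WORLD[state]
    if not options:
        return 0.0
    return max(reward + best_return(nxt) for reward, nxt in options.values())


def lookahead_action(state: str) -> str:
    """Sequential choice: immediate reward plus the best future return."""
    options = WORLD[state]
    return max(options, key=lambda a: options[a][0] + best_return(options[a][1]))


print(greedy_action("start"))     # fast_lane
print(lookahead_action("start"))  # slow_lane
```

Exhaustive recursion is only practical for very small worlds like this one. Larger problems usually need depth limits, heuristics, or other search techniques.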

4. Static vs. Dynamic

- Static Environment: An environment that does not change while the agent is deliberating. The world waits for the agent to make a decision.

- Dynamic Environment: An environment that changes while the agent is thinking. Time passes and the world moves on regardless of whether the agent has acted yet.

- Semi-dynamic Environment: An environment that does not change while the agent deliberates, but the agent’s performance score changes with elapsed time.

Static examples: Chess (the board does not change during your turn), crossword puzzles, offline image processing.

Dynamic examples: Self-driving cars (traffic keeps moving), real-time strategy games, emergency response systems.

Semi-dynamic example: Chess with a clock (the board is static, but running out of time costs you the game).

Design impact: Static environments allow the agent to take as long as it needs to deliberate. Dynamic environments require fast decision-making — the cost of thinking too long may be as bad as thinking wrong.
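One way to cope with limited thinking time is an "anytime" procedure that always has a best-so-far answer ready. The sketch below is an illustration under simplifying assumptions: the deadline is modeled as a fixed budget of evaluations rather than real clock time, and the candidate speeds and scoring function are invented for the example.

```python
from typing import Callable, Iterable, Optional, TypeVar

A = TypeVar("A")


def anytime_choice(
    candidates: Iterable[A], score: Callable[[A], float], budget: int
) -> Optional[A]:
    """Return the best candidate found after at most `budget` evaluations."""
    best: Optional[A] = None
    best_score = float("-inf")
    for evaluated, candidate in enumerate(candidates):
        if evaluated >= budget:
            break  # out of thinking time: act on the best answer so far
        s = score(candidate)
        if s > best_score:
            best, best_score = candidate, s
    return best


def speed_score(speed: int) -> float:
    """Hypothetical preference: 40 is the ideal speed."""
    return -abs(speed - 40)


speeds = [10, 20, 30, 40, 50]
print(anytime_choice(speeds, speed_score, budget=2))   # 20
print(anytime_choice(speeds, speed_score, budget=10))  # 40
```

With a small budget the agent settles for a worse answer, which mirrors the trade-off in a dynamic environment: a decent action now can beat the best action too late.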

5. Discrete vs. Continuous

- Discrete Environment: An environment with a finite number of distinct states, percepts, and actions. Possibilities can be enumerated.

- Continuous Environment: An environment where states and/or actions vary along continuous scales. There are infinitely many possibilities within any range.

Discrete examples: Chess (finite board positions, finite legal moves), tic-tac-toe, text classification.

Continuous examples: Self-driving cars (position, velocity, and steering angle vary continuously), robot arm control (joint angles), thermostat (temperature).

Design impact: Discrete environments allow the agent, at least in principle, to enumerate and compare all possibilities (in practice the number can still be astronomically large, as in chess). Continuous environments require approximation, sampling, or optimization over infinite spaces.
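The difference shows up directly in code. In the sketch below, the discrete chooser simply compares every action, while the continuous chooser cannot do that and instead evaluates an evenly spaced grid over the range. Grid evaluation is just one simple approximation strategy, and the steering-angle value function is a made-up example.

```python
from typing import Callable, Sequence


def best_discrete(actions: Sequence[str], value: Callable[[str], float]) -> str:
    """Finite action set: enumerate and compare every option."""
    return max(actions, key=value)


def best_continuous(
    value: Callable[[float], float], low: float, high: float, samples: int
) -> float:
    """Approximate the best point in [low, high] on an evenly spaced grid.

    Requires samples >= 2. More samples give a finer approximation.
    """
    step = (high - low) / (samples - 1)
    grid = [low + i * step for i in range(samples)]
    return max(grid, key=value)


def steering_value(angle: float) -> float:
    """Hypothetical value function whose true optimum is at angle 0.3."""
    return -((angle - 0.3) ** 2)


move_values = {"advance": 1.0, "capture": 3.0, "retreat": -1.0}
print(best_discrete(list(move_values), move_values.get))  # capture
print(best_continuous(steering_value, -1.0, 1.0, samples=5))   # coarse grid
print(best_continuous(steering_value, -1.0, 1.0, samples=21))  # finer grid
```

The coarse grid can only return one of its five points, so its answer is off from the true optimum. The finer grid gets closer at the cost of more evaluations.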

6. Single-Agent vs. Multi-Agent

- Single-Agent Environment: An environment containing only one agent. No other agents are present whose behavior the agent must model or respond to.

- Multi-Agent Environment: An environment containing two or more agents whose actions can interact. Agents may be competitive (each agent’s gain is another’s loss) or cooperative (agents share goals and coordinate).

Single-agent examples: Solving a crossword puzzle alone, navigating a maze with no other robots.

Multi-agent examples: Chess (competitive — one player wins, one loses), self-driving cars (semi-competitive for road space, cooperative for collision avoidance), multiplayer games, negotiation systems.

Design impact: In a single-agent environment, the agent only needs to optimize its own behavior. In a multi-agent environment, the agent must reason about what other agents will do — which may require game theory, opponent modeling, or communication protocols.
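For the competitive two-player case, a standard technique is minimax, which assumes the opponent picks whatever is worst for us. The sketch below runs it on a tiny hand-made game tree (nested lists with numeric payoffs at the leaves, all invented for illustration) and contrasts it with a single-agent view that assumes every choice is ours.

```python
from typing import List, Union

# A tree is either a numeric payoff (leaf) or a list of subtrees.
Tree = Union[float, List["Tree"]]


def best_leaf(node: Tree) -> float:
    """Single-agent view: we control every choice, so take the best leaf."""
    if not isinstance(node, list):
        return float(node)
    return max(best_leaf(child) for child in node)


def minimax(node: Tree, maximizing: bool) -> float:
    """Two-player view: players alternate, and the opponent minimizes."""
    if not isinstance(node, list):
        return float(node)
    values = [minimax(child, not maximizing) for child in node]
    return max(values) if maximizing else min(values)


# We choose a branch at the root, then the opponent chooses a leaf.
tree: Tree = [[3, 12], [2, 8]]

print(best_leaf(tree))                 # 12.0
print(minimax(tree, maximizing=True))  # 3.0
```

The payoff of 12 is never reachable against a sensible opponent, which is why modeling the other agent changes the decision. Cooperative or mixed settings need different models than this purely adversarial one.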

Environment Classification Summary Table

| Environment | Dimension | Classification | Key Design Challenge |
|---|---|---|---|
| Chess | Observable | Fully Observable | None: full information |
| Chess | Deterministic | Deterministic | No uncertainty in outcomes |
| Chess | Episodic | Sequential | Early moves affect endgame |
| Chess | Static | Semi-dynamic | Clock pressure, but board is static |
| Chess | Discrete | Discrete | Can enumerate moves |
| Chess | Agents | Multi-Agent (competitive) | Must model opponent |
| Self-Driving Taxi | Observable | Partially Observable | Must track hidden objects |
| Self-Driving Taxi | Deterministic | Stochastic | Other drivers are unpredictable |
| Self-Driving Taxi | Episodic | Sequential | Lane choice has lasting consequences |
| Self-Driving Taxi | Static | Dynamic | World moves while deciding |
| Self-Driving Taxi | Discrete | Continuous | Position/speed vary smoothly |
| Self-Driving Taxi | Agents | Multi-Agent (mixed) | Coordinate with other vehicles |

The harder properties on each dimension create more demanding design challenges:

- Partial observability → agent needs memory and state estimation

- Stochastic → agent needs probabilistic reasoning

- Sequential → agent needs planning and lookahead

- Dynamic → agent needs real-time decision making

- Continuous → agent needs approximation methods

- Multi-agent → agent needs game theory and opponent modeling

The simplest possible environment (fully observable, deterministic, episodic, static, discrete, single-agent) can be handled by the simplest possible agent design. Every step toward the "harder" side demands a more sophisticated architecture.
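The mapping from "hard" properties to required capabilities can be written down as a small data structure. This is an illustrative sketch, not a standard API: the `EnvironmentProfile` class and its field names are invented here, each dimension is reduced to a boolean, and chess is treated as static (the clock that makes it semi-dynamic is ignored).

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EnvironmentProfile:
    """Six-dimension classification; True marks the 'easy' side."""
    name: str
    fully_observable: bool
    deterministic: bool
    episodic: bool
    static: bool
    discrete: bool
    single_agent: bool

    def required_capabilities(self) -> List[str]:
        """List the capability each 'hard' property demands."""
        needs: List[str] = []
        if not self.fully_observable:
            needs.append("memory and state estimation")
        if not self.deterministic:
            needs.append("probabilistic reasoning")
        if not self.episodic:
            needs.append("planning and lookahead")
        if not self.static:
            needs.append("real-time decision making")
        if not self.discrete:
            needs.append("approximation methods")
        if not self.single_agent:
            needs.append("game theory and opponent modeling")
        return needs


# Simplification: chess is marked static, ignoring clock pressure.
chess = EnvironmentProfile("chess", True, True, False, True, True, False)
taxi = EnvironmentProfile("self-driving taxi", False, False, False, False, False, False)

for env in (chess, taxi):
    print(env.name, len(env.required_capabilities()), env.required_capabilities())
```

Under these simplifications chess needs two of the six capabilities and the taxi needs all six, which matches the classifications in the summary table.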

Why Games Were Solved Before Robotics

It may seem surprising that AI mastered chess in 1997 and Go in 2016, but self-driving cars are still not fully deployed at scale in 2026. The environment taxonomy explains why:

Chess: Fully observable, deterministic, sequential, semi-dynamic, discrete, two-agent (competitive). The only challenging properties are "sequential" and "competitive" — both well-handled by search and game tree algorithms.

Self-driving: Partially observable, stochastic, sequential, dynamic, continuous, multi-agent (mixed). Every single dimension is on the hard side. Each one requires a separate engineering solution, and they all interact.

This is not a failure of AI research — it is a consequence of the environment’s inherent complexity.

Classify the following environments along all six dimensions, and explain which dimension creates the greatest design challenge for an agent in that environment:

- A robot that sorts recyclables on a conveyor belt

- A chatbot customer service agent for an airline

- A poker-playing AI

For the chatbot, pay particular attention to observability — what aspects of a customer’s intent or emotional state are not visible to the agent?


Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.