Forward Chaining

Unit 6: Knowledge-Based Agents and Inference — Section 6.3

Imagine a smoke alarm system. When sensors detect smoke and elevated temperature, the alarm fires. The system does not wait for someone to ask "is there a fire?" — it continuously scans its inputs and triggers actions the moment conditions are met. This proactive, data-first style of reasoning is exactly what forward chaining does.

See forward and backward chaining algorithms traced through examples.

What Is Forward Chaining?

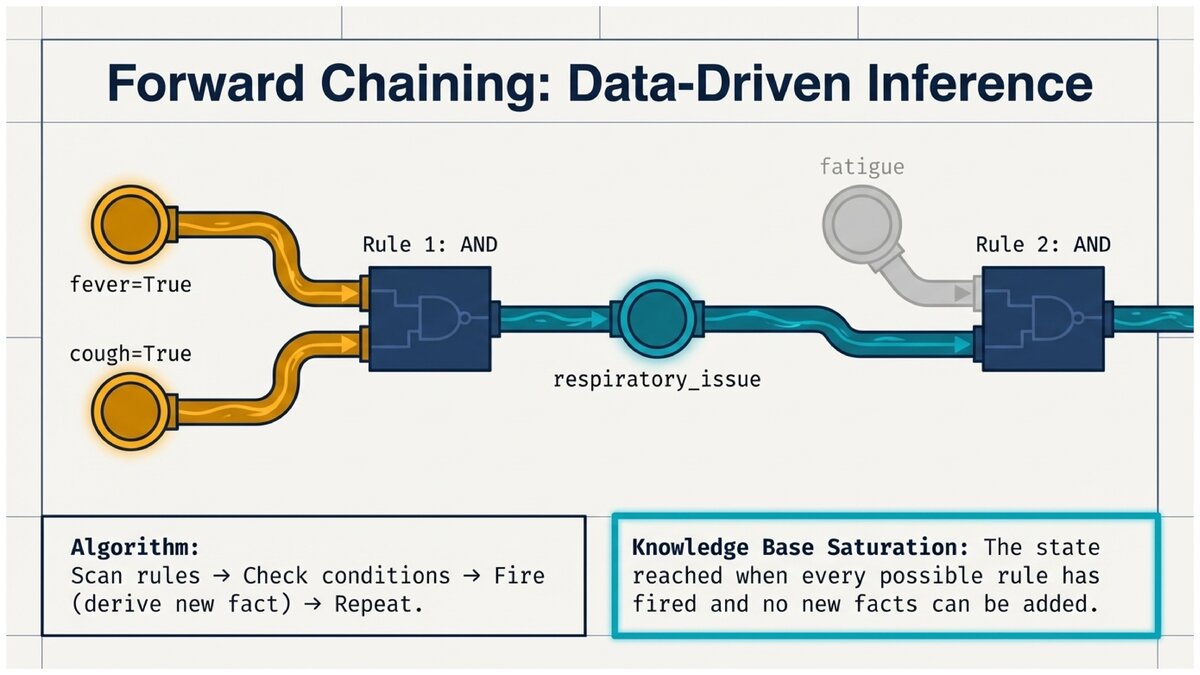

Forward chaining is a data-driven inference strategy. The agent starts with the facts currently in its knowledge base, systematically applies rules whose conditions are satisfied, and keeps adding new facts until no more rules can fire. At the end of this process, the KB contains every fact that can logically be derived from the initial information.

- Forward Chaining

-

An inference algorithm that begins with known facts and applies rules in the forward direction (antecedent → consequent) until no new facts can be derived. Also called data-driven reasoning because the data (facts) drive the process.

- Knowledge Base Saturation

-

The state reached when forward chaining has fired every rule that can fire and no new facts can be added. The saturated KB contains the complete set of facts entailed by the original KB.

The Forward Chaining Algorithm

Forward Chaining Algorithm

-

Initialize: Start with all known facts in the KB.

-

Scan: Examine every rule in the KB.

-

Check: Is this rule’s condition (antecedent) fully satisfied by the current set of known facts?

-

Fire: If yes, and if the conclusion is not already known, add the conclusion to the KB.

-

Repeat: Return to step 2.

-

Stop: When one complete pass through all rules produces no new facts, the KB is saturated.

The algorithm always terminates because: (a) the KB contains a finite number of possible facts, and (b) once a fact is added it is never removed, so the set of known facts can only grow.

Tracing Forward Chaining: A Complete Example

Let us trace forward chaining through a medical knowledge base. This is the same example the expert system lab will use.

Medical Diagnosis via Forward Chaining

Initial facts:

fever = TRUE cough = TRUE fatigue = TRUE body_aches = TRUE

Rules in the KB:

R1: fever ∧ cough → respiratory_issue R2: respiratory_issue ∧ fatigue → possible_flu R3: possible_flu ∧ body_aches → flu R4: flu → recommend_rest R5: flu → recommend_fluids R6: flu → consider_antiviral

Iteration 1 — scan all rules:

R1: fever=TRUE ∧ cough=TRUE → FIRE R1

Added: respiratory_issue = TRUE

R2: respiratory_issue=FALSE (not yet) skip

R3: possible_flu=FALSE (not yet) skip

R4: flu=FALSE (not yet) skip

R5: flu=FALSE (not yet) skip

R6: flu=FALSE (not yet) skip

New facts this iteration: {respiratory_issue}

Known facts: {fever, cough, fatigue, body_aches, respiratory_issue}

Iteration 2:

R2: respiratory_issue=TRUE ∧ fatigue=TRUE → FIRE R2

Added: possible_flu = TRUE

New facts: {possible_flu}

Known facts: {fever, cough, fatigue, body_aches, respiratory_issue, possible_flu}

Iteration 3:

R3: possible_flu=TRUE ∧ body_aches=TRUE → FIRE R3

Added: flu = TRUE

New facts: {flu}

Iteration 4:

R4: flu=TRUE → FIRE R4 Added: recommend_rest

R5: flu=TRUE → FIRE R5 Added: recommend_fluids

R6: flu=TRUE → FIRE R6 Added: consider_antiviral

New facts: {recommend_rest, recommend_fluids, consider_antiviral}

Iteration 5:

All rules already fired or conditions not met. No new facts derived → STOP.

Final inferred facts: {respiratory_issue, possible_flu, flu, recommend_rest, recommend_fluids, consider_antiviral}

Every fact in this set is a logical consequence of the initial facts and rules. None of them were told to the KB explicitly.

Why This Works: Correctness of Forward Chaining

Forward chaining is sound: every fact it derives is genuinely entailed by the KB. This follows directly from Modus Ponens — the only rule it applies. Since Modus Ponens is sound (if premises are true, conclusion must be true), every new fact added is guaranteed to be true given the initial facts.

For knowledge bases consisting of Horn clauses (covered in Section 6.5), forward chaining is also complete: it finds every fact that can be derived.

This means after saturation, ASK(KB, X) returns TRUE if and only if X genuinely follows from the original KB.

Forward chaining on Horn clause knowledge bases is both:

-

Sound — it never derives a false fact.

-

Complete — it derives every fact that is logically entailed.

-

Decidable — it always terminates with an answer.

Data Flow: A Visual Mental Model

Think of forward chaining as water flowing downhill through a pipe network:

-

Facts are water sources.

-

Rules are junctions: water only flows through when all input pipes have water.

-

New facts are reservoirs that, once filled, become new sources for downstream rules.

-

Saturation is reached when every reservoir that can fill has filled.

The medical example above has a clear chain: fever + cough → respiratory_issue → (with fatigue) possible_flu → (with body_aches) flu → recommendations.

Each stage fills a reservoir that enables the next.

When to Use Forward Chaining

Forward chaining is the right strategy when:

-

You have a set of facts and want to know all consequences.

-

The system is reactive — it acts when conditions are met, not when asked.

-

Many different conclusions might be needed from the same set of facts.

-

New facts arrive continuously and the system must respond in real time.

Classic use cases:

-

Production systems (OPS5, CLIPS): industrial process control, smart home automation.

-

Monitoring systems: network intrusion detection, medical alert systems.

-

Business rule engines: loan approval, fraud detection, order fulfillment.

-

Expert system labs: like the one you will complete this week.

A More Complex Example: Computer Troubleshooting

Computer Diagnosis KB (condensed)

Initial facts: power_button_pressed = TRUE, lights_on = FALSE

Rules:

R1: power_button_pressed ∧ ¬lights_on → power_supply_dead R2: power_supply_dead → check_power_cable R3: power_supply_dead → check_wall_outlet R4: power_supply_dead → replace_power_supply

Forward chaining trace:

Iteration 1: R1: power_button_pressed ∧ ¬lights_on → FIRE Added: power_supply_dead Iteration 2: R2: power_supply_dead → FIRE → Added: check_power_cable R3: power_supply_dead → FIRE → Added: check_wall_outlet R4: power_supply_dead → FIRE → Added: replace_power_supply Iteration 3: No new facts. STOP.

The system has produced a ranked diagnostic checklist entirely from two observed symptoms.

Consider a smart home system that uses forward chaining. The KB contains rules like:

motion_detected ∧ after_sunset → turn_on_lights door_unlocked ∧ nobody_home → send_security_alert temperature < 65 ∧ thermostat_auto → activate_heating

What facts would need to be present for all three rules to fire simultaneously? What could go wrong if the sensor data is incorrect? How does this connect to the challenge of handling uncertainty in real-world AI systems?

Trace forward chaining through a small knowledge base.

Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.