Backward Chaining

Unit 6: Knowledge-Based Agents and Inference — Section 6.4

A doctor does not sit in an exam room and randomly derive every possible medical fact about a patient before deciding anything. Instead, the doctor focuses on a specific question — "Does this patient have appendicitis?" — and works backward: what symptoms would confirm or rule out that diagnosis? What tests would be informative? This targeted, question-first style of reasoning is backward chaining.

In the previous section, forward chaining started with known facts and derived all possible conclusions. Backward chaining reverses this: start with the conclusion you want to prove and work backward to find the facts that would support it. Both algorithms use Modus Ponens, but they apply it in opposite directions.

What Is Backward Chaining?

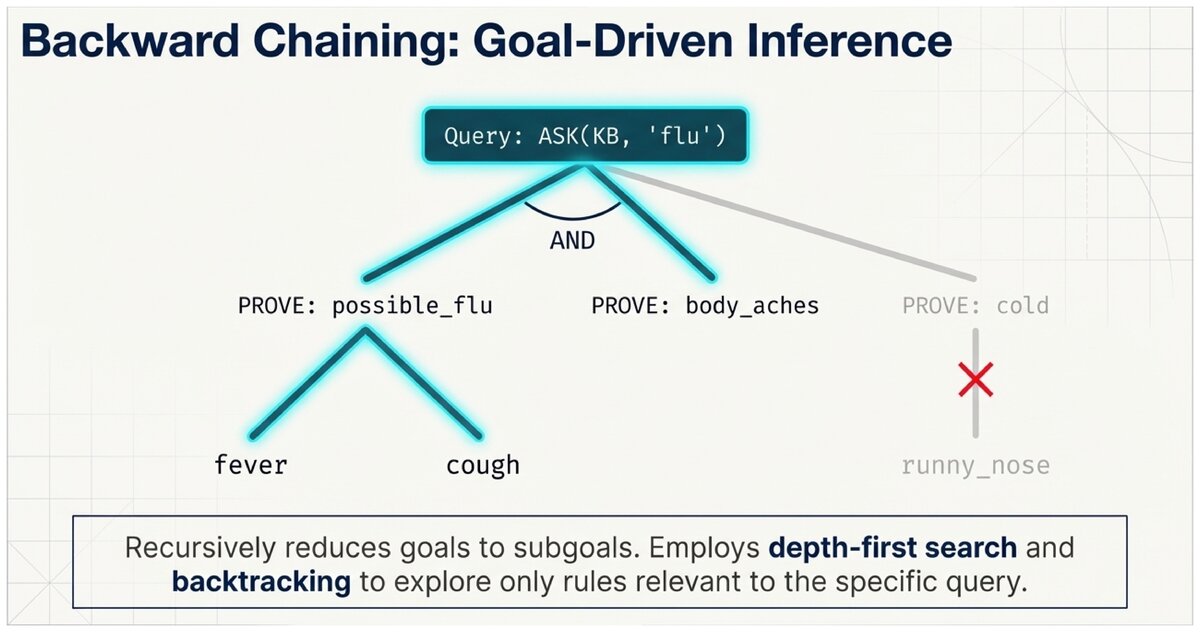

Backward chaining is a goal-driven inference strategy. The agent starts with a goal (the query it wants to prove) and searches for rules that could establish that goal. For each rule found, its conditions become new subgoals to prove. The process recurses until either all subgoals are established by known facts, or no path to the goal can be found.

- Backward Chaining

-

An inference algorithm that begins with a goal and works backward through rules to find supporting facts. Also called goal-driven reasoning because the goal drives the search.

- Subgoal

-

A condition that must be proven in order to establish a higher-level goal. Backward chaining recursively reduces goals to subgoals until they are either directly known facts or proven through their own subgoals.

The Backward Chaining Algorithm

Backward Chaining Algorithm

-

Goal: Take the query you want to prove as the current goal.

-

Check facts: Is the goal already a known fact in the KB? If yes, return SUCCESS.

-

Find rules: Are there any rules in the KB whose conclusion matches the current goal?

-

If no rules: Return FAILURE — the goal cannot be proven.

-

Choose rule: Select a rule whose conclusion matches the goal.

-

New subgoals: Each condition in that rule’s antecedent becomes a new subgoal.

-

Recurse: Prove each subgoal using this same algorithm.

-

Backtrack: If one path fails, try another rule (depth-first search with backtracking).

-

Success: If all subgoals on some path succeed, the original goal is proven.

The key efficiency insight: backward chaining only explores facts and rules that are relevant to the goal. If you ask "Does this patient have flu?" the algorithm never derives unrelated facts like "recommend_antihistamine." Forward chaining would derive everything; backward chaining focuses only on what you asked.

Tracing Backward Chaining: Complete Example

Let us use the same medical knowledge base from Section 6.3 to compare directly.

Medical Diagnosis via Backward Chaining

Known facts:

fever = TRUE cough = TRUE fatigue = TRUE body_aches = TRUE

Rules:

R1: fever ∧ cough → respiratory_issue R2: respiratory_issue ∧ fatigue → possible_flu R3: possible_flu ∧ body_aches → flu R4: flu → recommend_rest R5: flu → recommend_fluids

Query: ASK(KB, "recommend_rest")

Step 1 — Goal: recommend_rest

Is "recommend_rest" a known fact? No. Find rule with conclusion "recommend_rest": R4 (flu → recommend_rest) New subgoal: PROVE "flu"

Step 2 — Subgoal: flu

Is "flu" a known fact? No. Find rule with conclusion "flu": R3 (possible_flu ∧ body_aches → flu) New subgoals: PROVE "possible_flu" AND PROVE "body_aches"

Step 3 — Subgoal: body_aches

Is "body_aches" a known fact? YES ✓ Subgoal satisfied.

Step 4 — Subgoal: possible_flu

Is "possible_flu" a known fact? No. Find rule with conclusion "possible_flu": R2 (respiratory_issue ∧ fatigue → possible_flu) New subgoals: PROVE "respiratory_issue" AND PROVE "fatigue"

Step 5 — Subgoal: fatigue

Is "fatigue" a known fact? YES ✓ Subgoal satisfied.

Step 6 — Subgoal: respiratory_issue

Is "respiratory_issue" a known fact? No. Find rule with conclusion "respiratory_issue": R1 (fever ∧ cough → respiratory_issue) New subgoals: PROVE "fever" AND PROVE "cough"

Step 7 — Subgoal: fever and cough

Is "fever" a known fact? YES ✓ Is "cough" a known fact? YES ✓ Both subgoals satisfied.

Back-propagating success:

fever ∧ cough proven → respiratory_issue proven ✓ (R1) respiratory_issue ∧ fatigue proven → possible_flu proven ✓ (R2) possible_flu ∧ body_aches proven → flu proven ✓ (R3) flu proven → recommend_rest proven ✓ (R4)

Final answer: recommend_rest = TRUE

Crucially, the algorithm never computed recommend_fluids or consider_antiviral — those facts were irrelevant to the specific query.

The Proof Tree

Backward chaining produces a proof tree — a hierarchical record of how the goal was established. Reading the tree from leaves to root gives you the complete justification for the conclusion:

recommend_rest (proved by R4)

└── flu (proved by R3)

├── possible_flu (proved by R2)

│ ├── respiratory_issue (proved by R1)

│ │ ├── fever [known fact]

│ │ └── cough [known fact]

│ └── fatigue [known fact]

└── body_aches [known fact]This tree is the explanation for the conclusion. Expert systems that implement backward chaining can display this tree to users, allowing them to see exactly why the system reached a conclusion. This is one of the key advantages of logic-based AI over black-box machine learning.

Prolog: Backward Chaining in Practice

The programming language Prolog (Programming in Logic) uses backward chaining as its core execution strategy. Every Prolog rule is a Horn clause (Section 6.5), and querying the Prolog interpreter triggers backward chaining automatically.

Prolog Example: Family Relationships

% Facts

parent(tom, bob).

parent(bob, ann).

% Rule

grandparent(X, Z) :- parent(X, Y), parent(Y, Z).Query: ?- grandparent(tom, ann).

Prolog backward-chains:

. Goal: grandparent(tom, ann)

. Matches rule: grandparent(X, Z) :- parent(X, Y), parent(Y, Z) with X=tom, Z=ann

. Subgoals: parent(tom, Y) and parent(Y, ann)

. parent(tom, Y) matches fact parent(tom, bob) → Y=bob

. parent(bob, ann) matches fact parent(bob, ann) ✓

. All subgoals satisfied → answer: TRUE

Prolog automatically finds this proof without the programmer specifying how to search.

Backtracking: When the First Path Fails

Backward chaining uses depth-first search with backtracking. If a chosen rule leads to subgoals that cannot be satisfied, the algorithm backs up and tries a different rule.

Backtracking Example

Suppose the query is flu but the patient has fever, cough but not fatigue.

Goal: flu

Try R3: possible_flu ∧ body_aches → flu

Subgoal: possible_flu

Try R2: respiratory_issue ∧ fatigue → possible_flu

Subgoal: fatigue → FAIL (not a known fact)

Backtrack: no other rules conclude possible_flu

Subgoal possible_flu FAILS

Backtrack: no other rules conclude flu

Result: flu CANNOT be proven.

ASK(KB, "flu") → UNKNOWN

The backtracking search tried every relevant rule and found no proof. This is an honest answer: the KB does not have enough information to conclude flu.

Forward vs. Backward Chaining: Side-by-Side

| Aspect | Forward Chaining | Backward Chaining |

|---|---|---|

Direction |

Facts → Conclusions |

Goal → Supporting Facts |

Approach |

Data-driven |

Goal-driven |

What it computes |

All possible conclusions |

Proof of one specific goal |

Efficiency |

May derive many irrelevant facts |

Only explores relevant paths |

Memory |

Grows as facts accumulate |

Grows as subgoal stack deepens |

Best when |

Many conclusions needed from same facts |

Specific question to answer |

Completeness |

Finds all entailed facts (Horn clause KBs) |

Proves specific goal if provable |

Classic systems |

OPS5, CLIPS, Rete algorithm |

Prolog, MYCIN (diagnostic mode) |

Neither strategy is universally better. The right choice depends on your use case:

-

If new sensor data arrives continuously and you want to act on all consequences: use forward chaining.

-

If a user asks a specific question and you want to answer efficiently: use backward chaining.

-

If you need to diagnose a problem from symptoms: backward chaining is often more natural (start from suspected diagnoses and check supporting evidence).

-

If you need to monitor conditions and trigger alerts: forward chaining fits naturally (trigger when conditions are met).

Consider a student registration system at a college. A student asks: "Am I eligible to graduate?"

Sketch the backward chaining process. What would be the top-level goal? What subgoals would need to be proven? What are the "base facts" at the leaves of the proof tree (e.g., "passed MATH-101 = TRUE")?

Now consider the same problem from the forward chaining perspective: the registrar’s office processes all student records nightly and flags students who have met graduation requirements. Which approach matches each scenario better, and why?

Compare forward and backward chaining on the same knowledge base.

Based on the UC Berkeley CS 188 Online Textbook by Nikhil Sharma, Josh Hug, Jacky Liang, and Henry Zhu, licensed under CC BY-SA 4.0.

This work is licensed under CC BY-SA 4.0.